Find and Remove Duplicates in Google Sheets: A Practical Guide

Learn practical, step-by-step methods to identify and remove duplicates in Google Sheets using conditional formatting, COUNTIF, UNIQUE, and more. Build reliable data with best practices and real-world examples.

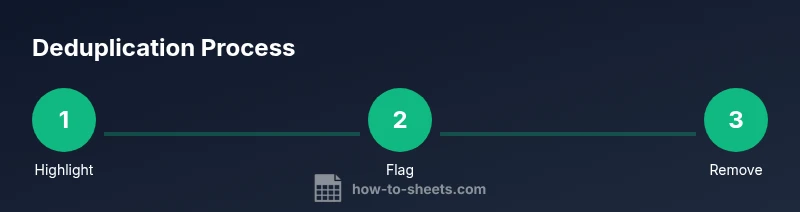

You will learn how to locate and remove duplicates in Google Sheets for cleaner, more reliable data. Key methods include conditional formatting to highlight duplicates, COUNTIF formulas to flag repeats, and the built‑in Remove duplicates tool for quick cleanup. This guide also shows how to preserve original data through backups and plan a reusable workflow.

Understanding duplicates in Google Sheets

In Google Sheets, a duplicate occurs when the same value appears more than once within a selected range, within a column, or across multiple columns. For many students and professionals, duplicates distort summaries, skew pivot tables, and waste time during analysis. According to How To Sheets, clean data starts with a clear definition of what counts as a duplicate: an exact match of the whole row, an exact match within a subset of columns, or a match spread across several fields. This section covers what to treat as a duplicate in your own datasets and sets the stage for practical detection and remediation steps.

Understanding the scope of duplicates helps you choose the right tool for each situation—whether you’re de-duplicating a single column, a multi-column table, or a full sheet. Think about how your downstream analyses (filters, charts, and pivot tables) will react to duplicates if left in place, and decide whether you need a soft flag, a hard removal, or a transformed view of the data.

Why duplicates matter in data

Duplicates are more than cosmetic issues; they can seriously affect decision-making. When the same record appears multiple times, totals become inflated, averages shift, and trend lines misrepresent reality. In reporting environments, duplicated rows can cause double-counting in summaries, leading to misguided conclusions. For teams using Google Sheets for data entry, project tracking, or inventory management, removing duplicates helps ensure consistency across dashboards and shared views. A deliberate deduplication strategy also reduces the risk that collaborators will unintentionally reintroduce duplicates during data imports or merges. By addressing duplicates early, you preserve data integrity, speed up analysis, and improve trust in your numbers.

Common duplication scenarios

Duplicates show up in several common scenarios. You might import data from another system that includes repeated records, copy-paste data into an existing sheet, or append new rows without checking them against a unique key. Sometimes duplicates are not exact text matches but are semantically identical (e.g., two variants of the same product name that differ only by trailing spaces). They can appear in a single column, across adjacent columns (where the same entity is captured in multiple fields), or across multiple sheets in a workbook. Recognizing these patterns helps you tailor deduplication rules, for example deduplicating by a single key column versus deduplicating by a composite key derived from multiple fields.
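Near-duplicates such as names that differ only by trailing spaces can be caught by normalizing values before comparing them. The sketch below is plain JavaScript of the kind that could be pasted into Apps Script. The function name normalizeKey and the choice to trim, collapse whitespace, and lowercase are assumptions for illustration, not rules built into Google Sheets.

```javascript
// Hypothetical helper: reduce a cell value to a comparison key.
// Assumption: values that differ only by case or extra whitespace are the same.
function normalizeKey(value) {
  return String(value)
    .trim()                // drop leading and trailing spaces
    .replace(/\s+/g, " ")  // collapse runs of whitespace into one space
    .toLowerCase();        // ignore capitalization
}

const productNames = ["Blue Widget", "blue widget ", "Blue  Widget", "Red Widget"];
const distinctNames = new Set(productNames.map(normalizeKey));
console.log(distinctNames.size); // 2
```

Whether lowercasing is appropriate depends on the data: for case-sensitive identifiers, that step would likely need to be dropped.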

Detection methods in Google Sheets

Detecting duplicates can be done with built-in features or formulas. The most accessible approach is conditional formatting, which highlights duplicates so you can review them visually: open Format > Conditional formatting, choose “Custom formula is”, and enter =COUNTIF(A:A, A1) > 1 for a range that starts at A1. For formula-driven detection, COUNTIF and COUNTIFS are powerful: =COUNTIF(A:A, A1) > 1 flags duplicates in column A, and =COUNTIFS(A:A, A1, B:B, B1) > 1 flags rows that repeat across two columns together. You can also build a distinct view with =UNIQUE(range), or use =FILTER(range, COUNTIF(range, range) > 1) on a single-column range to pull only the duplicated values. For large datasets, consider QUERY to group and count values. Finally, cross-sheet duplicates can be detected by stacking ranges with {range1; range2} and applying the same logic.
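The counting logic behind =COUNTIF(A:A, A1) > 1 can be sketched in plain JavaScript, for instance as a building block for an Apps Script check. The function name flagDuplicates and the sample addresses are hypothetical. Note one difference: this sketch compares values exactly, whereas COUNTIF ignores case.

```javascript
// Hypothetical helper mirroring the COUNTIF(...) > 1 test on one column of values.
function flagDuplicates(values) {
  const counts = new Map(); // value -> number of times it appears
  for (const v of values) {
    counts.set(v, (counts.get(v) || 0) + 1);
  }
  // true marks every occurrence of a repeated value, including the first one
  return values.map((v) => counts.get(v) > 1);
}

const columnA = ["ann@example.com", "bob@example.com", "ann@example.com", "cy@example.com"];
const duplicateFlags = flagDuplicates(columnA);
console.log(duplicateFlags); // [ true, false, true, false ]
```

Counting in one pass and flagging in a second keeps the work linear in the number of cells, which matters more as the column grows.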

Removal techniques: built-in tools

Google Sheets provides a straightforward path to remove duplicates: Data > Data cleanup > Remove duplicates. In the dialog, indicate whether the data has a header row, select the columns that define a duplicate (one column, several, or all of them), and confirm. The tool keeps the first occurrence of each duplicate and deletes the later rows, so it's wise to create a backup first. If you would rather leave the original untouched, generate a deduplicated view with =UNIQUE(range) and copy-paste the result as values where you need it. For ongoing workflows, keep a separate sheet that references the raw data via =UNIQUE, validate it, and then replace the original data with the cleaned version.

Formula-based approaches to identifying duplicates

Formulas give you dynamic control over how duplicates are detected. A simple approach uses COUNTIF to flag duplicates in a helper column: =IF(COUNTIF(A:A, A2) > 1, "duplicate", "unique"). You can then filter or sort by this helper column to review duplicates. For multi-column duplicates, either check the columns together with =COUNTIFS(A:A, A2, B:B, B2) > 1, or create a combined key with =A2 & "|" & B2 and apply COUNTIF to that key. A more advanced technique uses QUERY to summarize and identify duplicates, e.g., =QUERY(A:C, "…", 1) with a query string that contains a group by clause. If you want to keep only the first occurrence, you can use =FILTER(A:C, MATCH(A:A, A:A, 0) = ROW(A:A)), which keeps each row where the value in column A appears for the first time and drops subsequent ones. These formulas stay responsive as data changes and can be adapted to other columns for consistent dedup logic.
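The combined-key and first-occurrence ideas above can be put together in a few lines of JavaScript. This is a sketch under stated assumptions: keepFirstOccurrence is a hypothetical name, key columns are given as zero-based indexes, and the sample rows are invented. Unlike the FILTER formula, which keys on column A only, this version keys on every column listed.

```javascript
// Hypothetical helper: keep the first row seen for each composite key.
// keyColumns holds zero-based column indexes (an assumption of this sketch).
function keepFirstOccurrence(rows, keyColumns) {
  const seen = new Set();
  return rows.filter((row) => {
    // Same idea as =A2 & "|" & B2 in a helper column
    const key = keyColumns.map((c) => row[c]).join("|");
    if (seen.has(key)) return false; // later occurrence: drop it
    seen.add(key);
    return true; // first occurrence: keep it
  });
}

const people = [
  ["Ann", "1990-01-01", "Paris"],
  ["Bob", "1985-05-05", "Oslo"],
  ["Ann", "1990-01-01", "Lyon"],
  ["Ann", "1991-02-02", "Rome"],
];
const firstOnly = keepFirstOccurrence(people, [0, 1]);
console.log(firstOnly.length); // 3
```

The "|" separator only works if it never appears inside the key values themselves. If it might, a different separator would be needed.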

Automating duplicates checks with Apps Script

For teams that rely on repeatable deduplication, Apps Script offers automation. A simple script can scan a range, collect duplicate rows based on a key column, and either highlight them or move them to a separate sheet for review. Example patterns include building a map of key values to their first index and removing all later occurrences, or creating a deduplicated view automatically and syncing it back to the main sheet after a confirmation step. Scripts can be scheduled or triggered by edits, making data hygiene an ongoing process rather than a one-off cleanup. Always test scripts on a copy of your data to avoid unintended loss.
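The "map of key values to their first index" pattern could look roughly like the sketch below. The pure helper splitDuplicates runs anywhere. The wrapper moveDuplicatesToReview only runs inside Apps Script and copies later occurrences to a review sheet without deleting anything. The function names, the sheet names "Data" and "Review", and the choice of the first column as the key are all assumptions for illustration.

```javascript
// Pure helper: split rows into first occurrences and later duplicates by one key column.
function splitDuplicates(values, keyColumn) {
  const firstIndex = new Map(); // key value -> index of the row where it first appeared
  const kept = [];
  const duplicates = [];
  values.forEach((row, i) => {
    const key = row[keyColumn];
    if (firstIndex.has(key)) {
      duplicates.push(row); // seen before: send to review
    } else {
      firstIndex.set(key, i);
      kept.push(row);
    }
  });
  return { kept, duplicates };
}

// Apps Script wrapper (runs only inside Google Sheets).
// Assumptions: sheets named "Data" and "Review"; row 1 of "Data" is a header.
function moveDuplicatesToReview() {
  const ss = SpreadsheetApp.getActiveSpreadsheet();
  const data = ss.getSheetByName("Data");
  const review = ss.getSheetByName("Review") || ss.insertSheet("Review");
  const [header, ...body] = data.getDataRange().getValues();
  const { duplicates } = splitDuplicates(body, 0);
  if (duplicates.length === 0) return;
  // Append duplicates below existing review rows; the source data is not modified
  review
    .getRange(review.getLastRow() + 1, 1, duplicates.length, header.length)
    .setValues(duplicates);
}

// Local check of the pure helper with invented rows
const sampleRows = [["a@example.com", 1], ["b@example.com", 2], ["a@example.com", 3]];
const splitResult = splitDuplicates(sampleRows, 0);
console.log(splitResult.kept.length, splitResult.duplicates.length); // 2 1
```

Keeping the comparison logic in a pure function makes it possible to test on sample arrays before the script ever touches a real sheet.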

Real-world examples: step-by-step setups

Example 1: Deduplicating a customer list by email, assuming the emails are in column A. Step 1, highlight duplicates with conditional formatting using =COUNTIF(A:A, A1) > 1. Step 2, add a helper column with =IF(COUNTIF(A:A, A2) > 1, "dup", "ok"). Step 3, open Data > Data cleanup > Remove duplicates, choose the email column as the key, and confirm.

Example 2: Finding duplicates across Name and DOB, assuming Name is in column A and DOB in column B. Step 1, create a helper column C with =A2 & "|" & B2. Step 2, apply =COUNTIF(C:C, C2) > 1 to flag duplicates. Step 3, pull just the duplicates into a separate sheet with a formula like =FILTER(A:C, COUNTIF(C:C, C:C) > 1).

These workflows demonstrate how to decide between per-column and cross-field deduplication depending on your data model.

Best practices and pitfalls

When dealing with duplicates, plan a backup strategy and test on a copy first. Always confirm that you are removing the intended duplicates and not affecting legitimate similar records. Document your deduplication rules so teammates understand why certain records were kept or removed. Be mindful of multi-column duplicates by using composite keys, and consider case sensitivity if your data sources vary in capitalization (COUNTIF, for instance, ignores case). Finally, automate checks rather than performing manual cleanup in repetitive tasks to minimize human error and save time over the long term.

Authority sources

For readers seeking formal guidance on data quality and duplication management, consult trusted sources such as Data.gov, NIST, and major educational institutions for data handling best practices. These references provide broader context on data quality, governance, and validation that complements practical Google Sheets techniques.

Wrap-up and next steps

To maintain clean data over time, combine the methods above: start with a quick visual audit via conditional formatting, implement formulas for ongoing checks, and consider an Apps Script workflow for automation. By establishing a repeatable deduplication routine, you reduce errors, improve reporting accuracy, and speed up collaboration across teams. As you scale your datasets, the same principles apply, but you’ll lean more on automated checks and robust data pipelines to keep duplicates at bay.

Tools & Materials

- Google account with edit access to the target Sheets (Needed to perform deduplication steps and apply formulas.)

- Backup copy of the dataset (Always keep a safe copy before removing duplicates.)

- Access to Data > Data cleanup tools (Built-in Remove duplicates option.)

- Optional: Apps Script editor access (For automated deduplication workflows.)

Steps

Estimated total time: 25-40 minutes

1. Prepare data and backup

Create a backup copy of your sheet and ensure headers are intact. If your data spans multiple columns, decide whether deduplication will rely on a single key column or a composite key across several fields.

Tip: Always work on a copy of the sheet to prevent data loss.

2. Highlight duplicates with conditional formatting

Select the range, go to Format > Conditional formatting, and apply a custom formula like =COUNTIF(A:A, A1) > 1. This highlights duplicates for quick visual inspection.

Tip: Use a distinct color to make duplicates easy to spot at a glance.

3. Flag duplicates with a helper column

In a new column, enter =IF(COUNTIF(A:A, A2) > 1, "duplicate", "unique"). Copy down to flag all occurrences. This creates a working reference for removal or review.

Tip: If your data updates, the helper column auto-refreshes.

4. Remove duplicates using the built-in tool

Open Data > Data cleanup > Remove duplicates, choose the key columns, and confirm. The tool keeps the first occurrence of each duplicate and deletes the rest.

Tip: Review the results on the backup copy before applying them to the original dataset.

5. Create a deduplicated view with a formula

Use =UNIQUE(range) to create a clean, deduplicated view. Copy-paste as values if you want to replace the original data with the cleaned version.

Tip: UNIQUE creates a dynamic view that updates as you modify data.

6. Cross-check with a dynamic filter

Use =FILTER(range, COUNTIF(range, range) > 1) on a single-column range to pull all duplicates into a separate sheet for audit. This helps ensure nothing is missed before removal.

Tip: The audit sheet also serves as a log of duplicates for accountability.

7. Automate checks with Apps Script (optional)

If you routinely deduplicate, write a small script to identify and move duplicates to a review sheet or remove them automatically after confirmation.

Tip: Test scripts on a copy to avoid accidental data loss.

FAQ

What is the best way to detect duplicates in Google Sheets?

The best method depends on the data: for quick visuals, use conditional formatting to highlight duplicates; for dynamic workflows, use COUNTIF or COUNTIFS with a helper column or a deduplicated view via UNIQUE. Multi-column duplicates may require a concatenated key or a COUNTIFS check.

Use conditional formatting for quick checks, or COUNTIF/COUNTIFS for dynamic duplication tracking.

How do I remove duplicates without losing the original data?

Always create a backup before removing duplicates. Use a dedicated deduplicated view with UNIQUE, then copy-paste values back if needed. Alternatively, remove from a copy sheet and replace the original after verification.

Back up first, then deduplicate on a copy and verify before replacing the original.

Can I automatically deduplicate on import?

Yes, with some setup. Apps Script has no dedicated import event, but a small script can run on a time-driven or change trigger after data arrives and either move duplicates to a review sheet or remove them automatically after confirmation. You can also use formulas to generate a clean view before importing into the primary sheet.

You can automate deduplication during import with a scripted workflow.

What about duplicates across multiple sheets in a workbook?

Use a 3-step approach: bring data into a single helper sheet, apply a composite key, and deduplicate using COUNTIFS across the combined range. Then, push the cleaned data back to each sheet as needed.

Consolidate to review duplicates across sheets, then deduplicate and re-distribute.
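As a rough sketch of the consolidation step, the JavaScript below collects key values from several sheets and reports the ones that occur on more than one sheet. The function name findCrossSheetDuplicates, the sheet names, and the invoice-style sample data are hypothetical. In Apps Script, each array of rows would typically come from a sheet's getDataRange().getValues(). Repeats within a single sheet are deliberately not reported here.

```javascript
// Hypothetical helper: find key values that appear on more than one sheet.
// `sheets` maps a sheet name to its rows; keyColumn is a zero-based index.
function findCrossSheetDuplicates(sheets, keyColumn) {
  const sheetsByKey = new Map(); // key value -> Set of sheet names containing it
  for (const [name, rows] of Object.entries(sheets)) {
    for (const row of rows) {
      const key = row[keyColumn];
      if (!sheetsByKey.has(key)) sheetsByKey.set(key, new Set());
      sheetsByKey.get(key).add(name);
    }
  }
  const result = {};
  for (const [key, names] of sheetsByKey) {
    if (names.size > 1) result[key] = [...names]; // present on two or more sheets
  }
  return result;
}

const workbook = {
  Q1: [["INV-1", 100], ["INV-2", 250]],
  Q2: [["INV-2", 250], ["INV-3", 75]],
};
console.log(findCrossSheetDuplicates(workbook, 0)); // { 'INV-2': [ 'Q1', 'Q2' ] }
```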

Does removing duplicates affect formulas or charts?

If duplicates impact your calculations (e.g., sums or averages), removing them can change results. Recalculate and verify charts after deduplication to ensure you’re not misrepresenting trends.

Deduplication can change results; verify calculations and charts afterward.

The Essentials

- Identify whether duplicates are per-column or cross-field

- Use conditional formatting for quick visual review

- Choose a removal method that preserves a fallback copy

- Leverage formulas for dynamic deduplication and reusable templates