Check for Duplicates in Google Sheets: A Practical Step-by-Step Guide

Learn practical, step-by-step methods to find and manage duplicates in Google Sheets using conditional formatting, formulas, and data extraction techniques for reliable data hygiene.

You will learn practical, repeatable methods to check for duplicates in Google Sheets, from quick visual checks to robust formulas and extraction techniques. This guide covers single-column and multi-column scenarios, explains when to highlight versus list duplicates, and shows how to set up reusable checks. Before you start, ensure you have a data range ready in Google Sheets and permission to edit the file.

What counts as duplicates in Google Sheets

In Google Sheets, a duplicate is any value that appears more than once in the same key column or across multiple key columns used to identify a record. According to How To Sheets, duplicates can be exact matches (identical characters and formatting) or near matches if you decide to normalize data first. The first step is to decide your definition: do you want to highlight exact text matches only, or include numbers treated as strings, leading zeros, or trailing spaces? For robust data cleaning, many teams treat trimmed, case-insensitive comparisons as duplicates. For example, the values Apple and apple should be considered duplicates if your workflow ignores case, while Apple (with a trailing space) should not unless you trim first.
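To make the "trimmed, case-insensitive" definition concrete, here is a minimal Python sketch of the comparison rule. Python is not part of the original guide; it is used here only to illustrate the logic, for example when cross-checking an exported CSV. The function name `normalize` and the sample values are hypothetical.

```python
def normalize(value: str) -> str:
    """Approximate LOWER(TRIM(value)) for plain text."""
    # Keep only the non-empty pieces between spaces: this drops leading and
    # trailing spaces and collapses repeated spaces into one.
    trimmed = " ".join(piece for piece in value.split(" ") if piece)
    return trimmed.lower()


# Hypothetical sample values that differ only in case or surrounding spaces.
samples = ["Apple", "apple", "Apple ", "  APPLE"]

exact_distinct = len(set(samples))                         # exact text comparison
normalized_distinct = len({normalize(s) for s in samples}) # after normalization

print(exact_distinct, normalized_distinct)
```

Under exact matching the four samples are all different; after normalization they collapse to a single key, which is the behavior to expect once you decide that case and stray spaces should not matter.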

Beyond single-column checks, duplicates often emerge when parallel columns together form a composite key. If a customer is identified by both Account ID and Email, the pair (AccountID, Email) should be treated as the unique key. In practice, you’ll look for both exact duplicates and near-duplicates caused by formatting inconsistencies, extra spaces, or different data types. Having a clear definition saves you from chasing false positives and speeds up cleaning. How To Sheets emphasizes defining the scope early: decide which columns form your key, and whether blanks count as duplicates. If your sheet contains thousands of rows, small inconsistencies become costly; a consistent rule set keeps downstream tasks predictable and auditable.
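The composite-key idea can be sketched in a few lines of Python, assuming the rows have been exported as (Account ID, Email) pairs. The sample rows, the function name `duplicate_keys`, and the `blanks_count` switch are illustrative assumptions, not part of the original guide.

```python
from collections import Counter

# Hypothetical sample rows: (Account ID, Email)
rows = [
    ("A-100", "ann@example.com"),
    ("A-100", "ann@example.com"),  # same pair as the row above
    ("A-100", "bob@example.com"),  # same ID, different email: a different key
    ("", ""),
    ("", ""),                      # a fully blank pair, repeated
]


def duplicate_keys(rows, blanks_count=False):
    """Return the set of composite keys that appear more than once."""
    keys = [tuple(row) for row in rows]
    if not blanks_count:
        # Drop keys where every cell is empty, so blanks are not reported.
        keys = [key for key in keys if any(cell != "" for cell in key)]
    counts = Counter(keys)
    return {key for key, n in counts.items() if n > 1}


print(duplicate_keys(rows))
print(duplicate_keys(rows, blanks_count=True))
```

The switch makes the "do blanks count?" decision explicit: with the default, only the repeated (Account ID, Email) pair is reported; with `blanks_count=True`, the repeated blank pair is reported as well.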

Quick-start methods to find duplicates

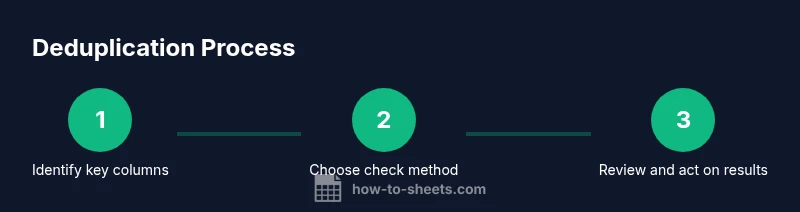

There isn’t a single magic button for every dataset, but there are reliable paths you can follow in Google Sheets. Start with a quick visual check, then choose a method that fits your data size and your goal (highlighting, listing, or removing duplicates). According to How To Sheets, the best approach often combines a fast visual cue with a precise formula or filter, so you can review results before applying changes. For small to medium datasets, conditional formatting and simple COUNTIF formulas cover most use cases. For larger datasets, building a separate duplicates list with FILTER or QUERY can help you audit data without altering the original sheet. If you routinely run de-dup processes, consider creating a template sheet that standardizes ranges, headers, and the definition of duplicates so your team can reuse the workflow consistently.

Method A: Conditional formatting to highlight duplicates

Conditional formatting is one of the quickest ways to spot duplicates without changing your data. The basic idea is to apply a formatting rule that marks cells whose value appears more than once in the chosen range. To implement, select the target column or range (for example A2:A1000). Open Format > Conditional formatting, choose "Custom formula is", and enter =COUNTIF($A$2:$A$1000, A2)>1. Pick a color that stands out, such as yellow or red, and apply. If you’re checking multiple columns for a composite key, use COUNTIFS so the rule tests every key column together (for example, =COUNTIFS($A$2:$A$1000, $A2, $B$2:$B$1000, $B2)>1). Pro tip: to highlight the whole row when a duplicate is found, set "Apply to range" to the full data block (for example A2:Z1000) and lock the column in the cell reference ($A2 rather than A2), so every cell in the row tests the key column. After applying, review the highlighted rows and decide whether to keep, merge, or remove duplicates. For large sheets, limit the range to actual data to maintain performance; full-column checks can slow down recalculation.
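To preview which rows a COUNTIF-based rule would mark, the check can be simulated outside the sheet. The Python sketch below is an illustration only: it assumes the column has been read into a list of strings starting at sheet row 2, compares text without regard to case (as COUNTIF does), and skips blank cells, which is an assumption about the desired behavior. The function name `rows_to_highlight` is hypothetical.

```python
from collections import Counter


def rows_to_highlight(values, first_row=2):
    """Sheet row numbers whose value appears more than once in the column."""
    # COUNTIF text matching ignores case, so compare lower-cased values.
    keys = [value.lower() for value in values]
    counts = Counter(keys)
    return [
        first_row + offset
        for offset, key in enumerate(keys)
        if key != "" and counts[key] > 1  # assumption: blanks are not flagged
    ]


# Hypothetical contents of A2:A7
column_a = ["apple", "Pear", "Apple", "", "pear", "kiwi"]
print(rows_to_highlight(column_a))  # [2, 3, 4, 6]
```

Comparing this list with the rows that actually light up in the sheet is a quick way to confirm the rule's range and references are set up as intended.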

Method B: Using formulas to identify duplicates

Formulas give you a precise, auditable way to mark or extract duplicates. A common pattern for a single column is: =IF(COUNTIF($A$2:$A$1000, A2)>1, "duplicate", "unique"). This returns a label you can filter or sort on. For a two-column key, use COUNTIFS to test the composite key: =IF(COUNTIFS($A$2:$A$1000, A2, $B$2:$B$1000, B2)>1, "duplicate", "unique"). If you want a dynamic list of duplicates, wrap the test in an IF to output blanks where there’s no duplication, or use FILTER to pull duplicates: =FILTER(A2:A1000, COUNTIF(A2:A1000, A2:A1000)>1). For multi-column duplicates, the pattern is =FILTER(A2:C1000, COUNTIFS(A2:A1000, A2:A1000, B2:B1000, B2:B1000, C2:C1000, C2:C1000)>1). To improve reliability, normalize the data before testing. COUNTIF already ignores case, but it does not ignore stray spaces, and trimming only the cell being tested leaves the rest of the range untrimmed. A dependable approach is a helper column: put =LOWER(TRIM(A2)) in column B, fill it down, and run the test on the helper values: =IF(COUNTIF($B$2:$B$1000, B2)>1, "duplicate", "unique"). Pro tip: document your ranges as named ranges to keep formulas readable and portable.
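The labeling pattern can be mirrored in Python to sanity-check results on exported data. This is a sketch under assumptions: the column is a list of strings, and every value (not only the one being tested) is trimmed and lower-cased before counting. The function name `label_duplicates` and the sample data are hypothetical.

```python
from collections import Counter


def label_duplicates(values):
    """Return "duplicate" or "unique" for each value, after normalization."""
    # Normalize every value first so both sides of the comparison match.
    keys = [value.strip().lower() for value in values]
    counts = Counter(keys)
    return ["duplicate" if counts[key] > 1 else "unique" for key in keys]


# Hypothetical column contents
column_a = ["Apple", "apple ", "Pear", "Kiwi", " pear"]
for value, label in zip(column_a, label_duplicates(column_a)):
    print(repr(value), label)
```

Because the labels come back in the same order as the input, they can be pasted next to the original column and compared with the formula's output row by row.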

Method C: Extracting duplicates to a separate list

Sometimes you want to isolate duplicates without editing the original data. Use FILTER to create a live list of duplicates, then optionally wrap it with UNIQUE so each duplicated value appears only once in the extracted list. Example for a single column: =FILTER(A2:A1000, COUNTIF(A2:A1000, A2:A1000)>1). If you need the duplicates alongside a second column (a composite key), use: =FILTER(A2:B1000, COUNTIFS(A2:A1000, A2:A1000, B2:B1000, B2:B1000)>1). To present a clean, de-duplicated set of duplicates, apply UNIQUE to the filtered results: =UNIQUE(FILTER(A2:A1000, COUNTIF(A2:A1000, A2:A1000)>1)). Pro tip: add headers to the output sheet and protect the output range to prevent accidental overwrites. For large datasets, consider using the SORT function to order duplicates by column, or the QUERY function for more complex criteria.
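The difference between the plain FILTER result and the UNIQUE-wrapped result is easy to see in a small Python sketch. It assumes exact text matching on already-normalized values, which is a simplification; the function names and sample data are hypothetical.

```python
from collections import Counter


def extract_duplicates(values):
    """Every entry whose value occurs more than once, in original order."""
    counts = Counter(values)
    return [value for value in values if counts[value] > 1]


def unique_in_order(values):
    """Keep the first occurrence of each value, preserving order."""
    return list(dict.fromkeys(values))


# Hypothetical column contents
names = ["ann", "bob", "ann", "cy", "bob", "ann"]

all_repeats = extract_duplicates(names)     # one entry per duplicated row
distinct_repeats = unique_in_order(all_repeats)  # one entry per duplicated value

print(all_repeats)       # ['ann', 'bob', 'ann', 'bob', 'ann']
print(distinct_repeats)  # ['ann', 'bob']
```

Use the first form when you need to review every affected row, and the second when you only need to know which values are duplicated.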

Practical tips for large datasets and performance

As data size grows, performance becomes a real concern. Use explicit ranges (A2:A100000) instead of whole-column references when possible, and prefer named ranges for clarity. A COUNTIF or COUNTIFS formula filled down a column is evaluated separately for every row, so keep its range as tight as the data allows and avoid repeating the same count in several places. When applying conditional formatting across many columns, limit the scope to the rows that actually hold data, and consider a custom formula that references a single helper column flagging duplicates, rather than repeating the check in every rule. Periodically clean your data by trimming spaces, standardizing case, and removing non-printable characters before running dedup checks. If your sheet is shared, document the exact steps you’ll take, so collaborators can reproduce the results. Finally, create a backup copy before performing bulk deletions or structural changes, so you can roll back if you misidentify a non-duplicate as a duplicate.
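As an analogy for why repeated per-row counting gets expensive, the Python sketch below compares scanning the whole column once per row with counting everything in a single pass. It says nothing about how Google Sheets evaluates formulas internally; it only illustrates the general cost difference, and the function names are hypothetical.

```python
from collections import Counter


def flags_per_row_scan(values):
    # One full scan of the column for every row: roughly n * n comparisons.
    return [values.count(value) > 1 for value in values]


def flags_single_pass(values):
    # Count everything once, then look each row up: roughly n steps.
    counts = Counter(values)
    return [counts[value] > 1 for value in values]


# Hypothetical data: both approaches must agree on every row.
data = ["a", "b", "a", "c", "b", "d"]
print(flags_per_row_scan(data) == flags_single_pass(data))
```

The two functions return identical flags; the single-pass version simply does the counting once and reuses it, which is the same idea as computing a duplicate flag in one helper column and referencing it elsewhere.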

Common mistakes and how to avoid them

The most common errors happen when people skip normalization or misinterpret blank cells. Always run a TRIM and, if needed, a LOWER transformation before testing for duplicates. Be careful with leading zeros: numbers stored as text can appear unique when they aren’t. For multi-column checks, ensure you test the complete key (for example, both ID and email) rather than scanning one column alone. Don’t forget about empty cells; decide in advance whether blanks count as duplicates in your workflow. If you’re sharing the sheet, explain the rules in a short deduplication note in the header and keep a changelog. Finally, test your rules on a small subset of data first to validate behavior before applying them to the entire dataset.
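The leading-zero pitfall comes down to choosing whether IDs are compared as text or as numbers. The Python sketch below shows both choices on a hypothetical exported column that mixes text and numeric cells; the function names and the sample values are assumptions for illustration.

```python
# Hypothetical cells as they might arrive from an export: text and numbers mixed.
raw = ["007", 7, "7", 7.0, ""]


def as_text_key(cell):
    """Compare as text: leading zeros are significant ("007" != "7")."""
    if isinstance(cell, float) and cell.is_integer():
        cell = int(cell)  # render 7.0 as "7" rather than "7.0"
    return str(cell).strip()


def as_number_key(cell):
    """Compare numerically where possible: "007", "7" and 7 all collide."""
    text = str(cell).strip()
    try:
        return float(text)
    except ValueError:
        return text  # blanks and non-numeric text stay as text


print([as_text_key(cell) for cell in raw])
print([as_number_key(cell) for cell in raw])
```

Neither rule is universally right: text comparison suits codes such as ZIP or product IDs where 007 and 7 are different records, while numeric comparison suits quantities. The point is to pick one rule and apply it to the whole column before counting.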

Tools & Materials

- Google Sheets access: open a sheet where duplicates will be checked and edited

- Sample dataset: have a data range ready (CSV or existing sheet)

- Backup copy: create a separate copy before bulk edits or deletions

- Formula cheat sheet: keep a reference for COUNTIF, COUNTIFS, FILTER, and UNIQUE

Steps

Estimated time: 30-60 minutes

- 1

Identify target range and key columns

Decide which column or set of columns forms the unique key for duplicates. Note the data range and headers. This establishes the scope for all subsequent checks.

Tip: Use named ranges to keep references stable as data grows.

- 2

Choose a primary method

Pick either conditional formatting for quick visual checks or formulas for auditable results. For large datasets, plan a separate duplicates list to avoid altering the original data.

Tip: If unsure, start with conditional formatting to gauge the scope.

- 3

Apply a duplicate test formula

Enter a COUNTIF or COUNTIFS formula to mark duplicates. If using a composite key, combine criteria to test the full key.

Tip: Normalize data first with TRIM and LOWER to reduce false positives.

- 4

Review results and adjust rules

Check a sample of flagged duplicates to confirm accuracy. Adjust ranges or definitions if needed before large-scale actions.

Tip: Filter by the duplicate label to focus review.

- 5

Extract or remove duplicates

Use FILTER or UNIQUE to pull duplicates into a new sheet, or use the built-in remove duplicates tool with care. Always keep a backup.

Tip: Document removal rules to ensure reproducibility.

- 6

Document the workflow

Create a short methodology note in the sheet header describing the checks, rules, and when data was deduplicated.

Tip: Share the note with collaborators to avoid confusion.

FAQ

What counts as a duplicate in Google Sheets?

A duplicate appears when a value repeats in the chosen key columns. Decide if blanks, case, and spaces count, and whether to normalize data before testing.

How can I highlight duplicates across multiple columns?

Use COUNTIFS to test the full composite key across the relevant columns, and apply conditional formatting with a custom formula that references all key columns.

What is the difference between highlighting duplicates and extracting them?

Highlighting provides a visual cue in place; extracting creates a separate list or sheet of duplicates for review or downstream processing.

Can I automate duplicate checks as new data comes in?

Yes. Use dynamic or named ranges so your formulas and formatting rules pick up new rows as data grows. For heavy workflows, consider Apps Script automation.

How do I remove duplicates safely?

Create a backup, use a test subset, then apply a remove duplicates operation or filter out non-unique entries. Document the criteria used.

The Essentials

- Define duplicates clearly before checking

- Use a mix of formatting and formulas

- Test on a small data subset first

- Back up data before bulk changes