Google Sheets Check for Duplicates: A Step-by-Step Guide

Learn to identify and remove duplicates in Google Sheets with a practical, step-by-step approach. This How To Sheets article covers core formulas, conditional formatting, and lightweight automation for clean data.

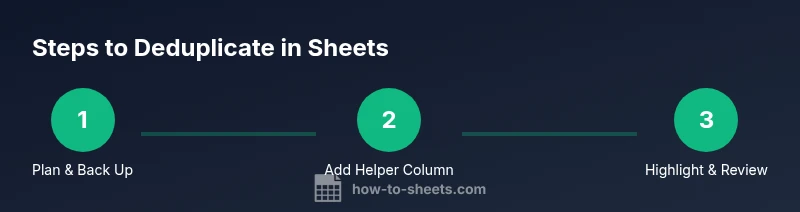

This guide shows you how to check for duplicates in Google Sheets and how to prevent the data quality issues they cause. It covers built-in formulas, conditional formatting, and simple scripts. According to How To Sheets, a quick three-step approach (identify, verify, and isolate duplicates) lets you keep datasets accurate and reliable. Keep a backup, test on a sample, and document your criteria for future audits.

What duplicates are in Google Sheets and why they matter

In Google Sheets, a duplicate is a row where the value in a key column matches another row's value, potentially across multiple columns if you define a composite key. Duplicates can creep in during data collection, imports, or collaborative edits, and they often distort counts, skew averages, and misrepresent customer lists. From a data-quality perspective, duplicates waste time during analysis and can lead to wrong business decisions if not caught early.

As the How To Sheets team notes, removing duplicates is not just about trimming numbers; it's about preserving the integrity of the dataset. A duplicate might be legitimate in some contexts but harmful in others (for example, a customer email repeated in a contact list is usually not desired unless it represents multiple orders). Start by defining what constitutes a duplicate for your task: the critical fields that must be unique, such as an ID, email, or composite key of several columns. Once you have your criteria, you can design checks that are precise, reproducible, and auditable.

In this section we will outline practical strategies that work across many datasets: simple visual checks, formula-based checks, and automated methods for ongoing data entry. The goal is not only to identify duplicates but also to create a workflow that prevents them from slipping into your sheets in the future.

According to How To Sheets analysis, data hygiene is foundational to reliable analysis, especially in collaborative environments where multiple users edit data.

Quick methods to detect duplicates in Google Sheets

There are several ways to identify duplicates in Sheets, ranging from quick visual cues to robust formula-driven checks. For smaller datasets, conditional formatting is often enough: you can highlight duplicate values in a column with a color rule, making duplicates obvious at a glance. For larger datasets or multi-column keys, formulas provide a scalable solution. The COUNTIF function is a popular, straightforward option: in a helper column, enter =COUNTIF($A$2:$A$1000, A2) > 1 to flag any value that appears more than once. If you need a two-column key, use COUNTIFS to count matches across both columns, or combine CONCATENATE/TEXTJOIN to create a single composite key.
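
For readers who want to see the logic behind the helper-column formula, here is a minimal sketch in JavaScript, the language Apps Script uses. It is an illustration rather than a built-in Sheets feature: `flagDuplicates` is a hypothetical helper name and the sample values are made up. It counts each value once, then flags every value that appears more than once, which is what =COUNTIF(range, cell) > 1 does per row.

```javascript
// Hypothetical helper: returns one boolean per input value,
// true when that value occurs more than once in the list.
function flagDuplicates(values) {
  const counts = new Map();
  for (const value of values) {
    counts.set(value, (counts.get(value) || 0) + 1);
  }
  return values.map((value) => counts.get(value) > 1);
}

// Made-up sample data standing in for cells A2:A6.
const sampleEmails = ["a@x.com", "b@x.com", "a@x.com", "c@x.com", "b@x.com"];
const sampleFlags = flagDuplicates(sampleEmails);
console.log(sampleFlags); // [ true, true, true, false, true ]
```

One difference to keep in mind: COUNTIF ignores letter case, while this sketch compares values exactly, so "A@x.com" and "a@x.com" would not match here unless you normalize them first.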

A typical workflow looks like this: (1) create a helper column to mark duplicates, (2) optionally keep a separate “Original” sheet with non-duplicates, (3) use conditional formatting to highlight flagged rows, and (4) review flagged items for action. For extra protection, you can use the UNIQUE function to extract a list of unique rows, or FILTER to display only duplicates. When you use functions, prefer absolute references for ranges and test on a copy of your data to avoid accidental loss. How To Sheets also emphasizes documenting your criteria and maintaining a change log for audits and future reviews.
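
The idea of producing a clean view alongside a list of duplicates can be sketched in JavaScript as follows. This is a loose analogue under stated assumptions, not a reimplementation of UNIQUE or FILTER: `splitByKey` is a hypothetical helper, the rows are invented, and the key is assumed to be the first two columns (Email and Order ID). It keeps the first row seen for each key and collects later repeats separately, so nothing is deleted.

```javascript
// Hypothetical helper: keeps the first row for each composite key and
// collects later rows with the same key in a separate list.
function splitByKey(rows, keyIndexes) {
  const seen = new Set();
  const unique = [];
  const repeats = [];
  for (const row of rows) {
    // JSON.stringify keeps ["ab", "c"] and ["a", "bc"] distinct.
    const key = JSON.stringify(keyIndexes.map((i) => row[i]));
    if (seen.has(key)) {
      repeats.push(row);
    } else {
      seen.add(key);
      unique.push(row);
    }
  }
  return { unique, repeats };
}

// Made-up rows: [Email, Order ID, Amount].
const sampleOrders = [
  ["ann@x.com", "1001", 20],
  ["bob@x.com", "1002", 35],
  ["ann@x.com", "1001", 20],
  ["ann@x.com", "1003", 15],
];
const splitResult = splitByKey(sampleOrders, [0, 1]);
console.log(splitResult.unique.length, splitResult.repeats.length); // 3 1
```

Note that the fourth row is kept: the email repeats, but the Email and Order ID pair does not, which is why defining the key first matters.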

In practice, most teams start with a single-column COUNTIF check, then graduate to multi-column keys and non-destructive workflows. This tiered approach reduces risk while preserving data integrity for downstream reporting.

Step-by-step workflow: identify, verify, and address duplicates

A solid deduplication workflow balances speed and accuracy. Begin by identifying duplicates using a simple rule set, then verify the findings against real-world criteria, and finally decide whether to remove, tag, or separate duplicates for further investigation. You should always preserve the original data until you’re confident in your results.

1) Define the key fields that determine a duplicate (for example, Email and Order ID).
2) Create a helper column with a COUNTIF/COUNTIFS formula to flag potential duplicates.
3) Apply conditional formatting to visually highlight duplicates across the relevant range.
4) Use UNIQUE or FILTER to validate that duplicates are correctly identified and to generate a clean, deduplicated view.
5) If automation is desired, consider a lightweight Apps Script to tag or move duplicates to a separate sheet rather than deleting them.
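
The tagging option in the last step could look roughly like the Apps Script sketch below. Everything specific in it is an assumption for illustration: the tab name "Data", headers in row 1, key fields in columns A and B, and a free column D for labels. `labelDuplicateRows` and `tagDuplicatesInSheet` are hypothetical names. The labeling logic is a plain function so it can be checked outside Sheets, and the wrapper only reads and writes cells.

```javascript
// Pure helper: returns a one-column array of labels, one per data row.
// Every row whose key appears more than once is labeled, including the
// first occurrence. Rows with all key fields blank are never labeled.
function labelDuplicateRows(rows, keyIndexes) {
  const isBlank = (row) => keyIndexes.every((i) => row[i] === "" || row[i] == null);
  const keyOf = (row) => JSON.stringify(keyIndexes.map((i) => row[i]));
  const counts = new Map();
  for (const row of rows) {
    if (isBlank(row)) continue;
    const key = keyOf(row);
    counts.set(key, (counts.get(key) || 0) + 1);
  }
  return rows.map((row) => [(!isBlank(row) && counts.get(keyOf(row)) > 1) ? "DUPLICATE" : ""]);
}

// Apps Script wrapper; it only runs inside Google Sheets.
// Assumed layout: tab "Data", headers in row 1, keys in A:B, labels in D.
function tagDuplicatesInSheet() {
  const sheet = SpreadsheetApp.getActiveSpreadsheet().getSheetByName("Data");
  const lastRow = sheet.getLastRow();
  if (lastRow < 2) return; // nothing below the header row
  const rows = sheet.getRange(2, 1, lastRow - 1, 2).getValues();
  const labels = labelDuplicateRows(rows, [0, 1]);
  // One batch write instead of one call per row.
  sheet.getRange(2, 4, labels.length, 1).setValues(labels);
}
```

Because the script only writes labels into a separate column, the original rows stay untouched and the labels can be cleared to undo the run.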

Best practice is to test steps on a copy of your dataset before applying them to live workbooks. Document the rules you used and the actions you took so audits and future reviews are straightforward.

Advanced methods: Apps Script, array formulas, and the UNIQUE function

For larger workbooks or ongoing data entry, options beyond basic formulas can save time and reduce manual steps. Apps Script can automatically scan new rows on edit, flag duplicates, or even move duplicates to a dedicated sheet. You can implement a simple onEdit trigger that checks the newly added row against existing data and writes a flag beside duplicates. An array formula such as =ARRAYFORMULA(IF(A2:A="", "", COUNTIF(A2:A, A2:A) > 1)) can flag duplicates for a whole column without dragging formulas down, though it needs an empty helper column to expand into and an understanding of array behavior. The UNIQUE function is invaluable for generating a clean list of unique rows from a dataset and can be combined with FILTER to produce a deduplicated view for reporting. When combining these methods, keep performance in mind and test incrementally to avoid slowing down very large sheets.
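
A simple onEdit trigger along these lines might look like the sketch below. The layout is assumed for illustration only: key values in column A, flags written to column C, headers in row 1. `alreadyExists` is a hypothetical helper. The sketch handles single-cell edits and compares strings and numbers exactly; pasted multi-cell ranges and date values would need extra handling.

```javascript
// Pure helper: true when a non-empty value is already in the list.
function alreadyExists(value, existing) {
  return value !== "" && existing.includes(value);
}

// Simple trigger: Apps Script calls onEdit(e) after a user edits a cell.
// Assumed layout: keys in column A, flags in column C, headers in row 1.
function onEdit(e) {
  const range = e.range;
  if (range.getColumn() !== 1 || range.getRow() < 2) return;
  const sheet = range.getSheet();
  const row = range.getRow();
  // Make sure the edited row is inside the span we read, even if it was cleared.
  const lastRow = Math.max(sheet.getLastRow(), row);
  const columnA = sheet
    .getRange(2, 1, lastRow - 1, 1)
    .getValues()
    .map((cells) => cells[0]);
  // Exclude the edited row itself so a value does not match its own cell.
  const others = columnA.filter((_, index) => index + 2 !== row);
  const flag = alreadyExists(range.getValue(), others) ? "DUPLICATE" : "";
  sheet.getRange(row, 3).setValue(flag);
}
```

This sketch flags only the row that was just edited. The earlier matching row is left as it is, which is one reason a periodic full-column check is still worth keeping.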

Tips for robust implementations include normalizing text with LOWER() and TRIM(), handling blanks explicitly, and maintaining versioned backups. The non-destructive approach—tagging or moving duplicates rather than deleting them—helps protect against accidental data loss and makes it easier to revert changes if needed.
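
To show why normalization and blank handling matter, here is a small JavaScript sketch. `normalizeKey` and `flagNormalizedDuplicates` are hypothetical helpers and the sample values are invented. The normalizer roughly mirrors LOWER(TRIM(value)): it trims the ends, collapses repeated whitespace, and lowercases. Blank cells are treated as "no key" so that empty rows are not reported as duplicates of each other.

```javascript
// Roughly mirrors LOWER(TRIM(value)); returns null for blank cells.
function normalizeKey(value) {
  if (value === null || value === undefined) return null;
  const text = String(value).trim().replace(/\s+/g, " ").toLowerCase();
  return text === "" ? null : text;
}

// Flags values whose normalized form appears more than once.
// Blank values are never flagged.
function flagNormalizedDuplicates(values) {
  const keys = values.map((value) => normalizeKey(value));
  const counts = new Map();
  for (const key of keys) {
    if (key !== null) counts.set(key, (counts.get(key) || 0) + 1);
  }
  return keys.map((key) => key !== null && counts.get(key) > 1);
}

// Made-up sample: the first two differ only by case and spacing.
const messyEmails = ["Ann@x.com", " ann@x.com ", "", "", "bob@x.com"];
console.log(flagNormalizedDuplicates(messyEmails));
// [ true, true, false, false, false ]
```

Without the normalization step, an exact comparison would treat the first two entries as different values and miss the duplicate.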

Handling duplicates in large datasets and shared sheets

As spreadsheets grow, the cost of naive duplicate checks rises. In large datasets, rely on a combination of formulas, filters, and batch operations to avoid lag and timeouts. Use a separate sheet or a data range with a defined boundary (e.g., A2:A100000) to run heavy checks, and consider splitting data into multiple sheets or using the IMPORTRANGE function to pull data from linked spreadsheets into one place so duplicates can be checked in a controlled fashion. When a dataset is shared, communicate clearly about deduplication rules and ensure that all collaborators can see the same criteria. Consider adding an audit column that records the date and the user who flagged or moved a duplicate, which helps trace changes during reviews.
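
One way to record who moved a duplicate and when is sketched below in JavaScript. The function name `moveDuplicatesWithAudit`, the column layout, and the sample rows are assumptions for illustration. The function processes all rows in one pass, which suits batch operations, and appends a timestamp and a user to every row it sets aside. Inside Apps Script the user could come from Session.getActiveUser().getEmail(), which may return an empty string depending on account and permission settings.

```javascript
// Keeps the first row per key; later rows with the same key are set aside
// with two extra audit fields appended: ISO timestamp and user.
function moveDuplicatesWithAudit(rows, keyIndex, user, when) {
  const seen = new Set();
  const kept = [];
  const moved = [];
  for (const row of rows) {
    const key = row[keyIndex];
    if (seen.has(key)) {
      moved.push([...row, when.toISOString(), user]);
    } else {
      seen.add(key);
      kept.push(row);
    }
  }
  return { kept, moved };
}

// Made-up rows: [Email, Name]; the user and timestamp are placeholders.
const contactRows = [
  ["ann@x.com", "Ann"],
  ["bob@x.com", "Bob"],
  ["ann@x.com", "Ann B."],
];
const auditResult = moveDuplicatesWithAudit(
  contactRows,
  0,
  "reviewer@example.com",
  new Date("2024-01-02T03:04:05Z")
);
console.log(auditResult.moved);
// [ [ 'ann@x.com', 'Ann B.', '2024-01-02T03:04:05.000Z', 'reviewer@example.com' ] ]
```

The `kept` rows can stay on the main sheet while the `moved` rows go to a dedicated tab, so reviewers can see exactly what was set aside and restore it if the rule turns out to be wrong.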

Additionally, always back up before applying bulk removals. In many real-world scenarios, duplicates indicate legitimate repeated records (e.g., repeated customer visits or repeat orders). Treat each case based on the defined key and the specific business rules.

Common pitfalls and best practices

Common pitfalls include treating all columns as part of a composite key without clear justification, which can label too many records as duplicates. Another pitfall is using one-off formulas in isolation and failing to update ranges when data grows. To avoid these issues, design a small, repeatable workflow that you can re-run when data changes, and use named ranges for clarity. Always maintain a backup copy before removing data and document your criteria so audits remain reproducible. When in doubt, test on a sample batch first and gradually scale up. Also consider whether duplicates should be flagged or moved rather than deleted, preserving an auditable trail of actions.

Finally, remember that no single method fits every dataset. A layered approach—visual checks, simple formulas, and optional automation—offers the most reliable path to clean, trustworthy sheets.

Ready-to-use templates and examples

The best way to learn is by example. Start with a small template that includes a single-column duplicate check, then expand to a multi-column composite key. Create a helper column with a COUNTIF formula, add a conditional formatting rule to highlight duplicates, and build a second sheet that lists unique records via the UNIQUE or FILTER functions. Save these templates in your workbook as a starting point for similar projects. For ongoing projects, maintain a changelog that logs when duplicates were found, how they were addressed, and who approved the final results. By building a library of templates and documented workflows, you can scale deduplication across multiple teams and datasets.

A practical approach is to keep a “Before” and “After” snapshot side-by-side during the deduplication process to ensure you can verify results and revert if necessary. Over time, you’ll develop a mental map of which fields are most prone to duplicates and which techniques strike the best balance between speed and accuracy.
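
A before-and-after comparison can also be checked programmatically. The JavaScript sketch below is one possible approach with a hypothetical helper, `compareSnapshots`, and made-up rows. It reports how many rows were removed and, more importantly, whether any key disappeared entirely, which would suggest that a record was lost rather than deduplicated.

```javascript
// Compares two snapshots by key column.
// rowsRemoved: difference in row count.
// missingKeys: keys present before but absent after (a warning sign).
function compareSnapshots(before, after, keyIndex) {
  const afterKeys = new Set(after.map((row) => row[keyIndex]));
  const beforeKeys = [...new Set(before.map((row) => row[keyIndex]))];
  const missingKeys = beforeKeys.filter((key) => !afterKeys.has(key));
  return { rowsRemoved: before.length - after.length, missingKeys };
}

// Made-up snapshots keyed on the first column.
const beforeRows = [["ann@x.com"], ["bob@x.com"], ["ann@x.com"]];
const afterRows = [["ann@x.com"], ["bob@x.com"]];
console.log(compareSnapshots(beforeRows, afterRows, 0));
// { rowsRemoved: 1, missingKeys: [] }
```

An empty `missingKeys` list means every key that existed before still has at least one row afterward.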

Tools & Materials

- Google account with access to Google Sheets (Ensure you have edit rights to the target spreadsheet)

- Sample dataset with duplicates (Create a copy to test deduplication workflows)

- Backup storage (Local drive or cloud backup for safety)

- A second sheet for deduplicated results (Optional practice to separate original and cleaned data)

- Apps Script editor (optional) (For lightweight automation tasks)

Steps

Estimated time: 45-75 minutes

Step 1: Open the dataset

Open the target spreadsheet and identify the key columns that define duplicates (for example, Email or a composite key of multiple fields). This step sets the scope for your checks and reduces ambiguity when you act on the data.

Tip: Document the chosen key fields before proceeding.

Step 2: Add a helper column with COUNTIF

Insert a new column and use a COUNTIF/COUNTIFS formula to flag duplicates, such as =COUNTIF($A$2:$A$1000, A2) > 1 for a single-column check or =COUNTIFS($A$2:$A$1000, A2, $B$2:$B$1000, B2) > 1 for a composite key.

Tip: Anchor ranges with $ so they stay fixed when you copy the formula down the column.

Step 3: Apply conditional formatting

Select the range, open the conditional formatting panel, and under "Format cells if…" choose "Custom formula is". Enter =COUNTIF($A$2:$A$1000, A2) > 1 and choose a distinct color. This provides a quick visual cue for reviewers.

Tip: Formatting is non-destructive, so it highlights duplicates without changing the data.

Step 4: Create a unique view for verification

Use the UNIQUE function or FILTER to generate a deduplicated view that you can compare against the original data. This helps validate that your flags align with actual duplicates.

Tip: Test with a small sample before applying widely.

Step 5: Automate or semi-automate as needed

If duplicates are a recurring issue, consider a lightweight Apps Script, run manually or on a time-driven trigger, that flags or moves duplicates to a separate sheet rather than deleting them from the main dataset.

Tip: Document automation choices and provide a rollback option.

FAQ

What is considered a duplicate in Google Sheets?

A duplicate is a row where the key fields match another row. Depending on your criteria, duplicates can be defined by a single column or a combination of columns forming a composite key.

Duplicates occur when key fields match another row; the key can be a single column or a combination of columns.

Should I use a helper column or a separate sheet for deduplication?

A helper column is quick for flagging duplicates directly in the dataset, while a separate sheet provides a clean deduplicated view for reporting. Both approaches are valid depending on the workflow.

Flag duplicates with a helper column for quick checks, or create a separate sheet for a clean view.

Is there a one-click dedup tool in Sheets?

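Sheets includes a built-in tool under Data > Data cleanup > Remove duplicates, which deletes repeated rows based on the columns you select. Because it deletes rows directly, it does not replace a review step. To flag and inspect duplicates first, combine COUNTIF/COUNTIFS, UNIQUE, and conditional formatting, or use a small Apps Script for automation.

The built-in Remove duplicates tool deletes rows; combine formulas and optional scripts when you need to review before removing.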

How can I deduplicate across multiple columns?

Create a composite key by concatenating the columns (or use COUNTIFS on multiple columns) to identify duplicates across a multi-column key.

Use a composite key or COUNTIFS across the needed columns.

Can duplicates be safely removed in a shared workbook?

Yes, but only after confirming the criteria and ensuring others understand the rules. Make a backup and consider tagging duplicates instead of deleting.

Yes, but back up first and ensure a clear rule for removal.

How often should I check for duplicates in dynamic datasets?

Check regularly as data is imported or edited, and implement an automated check if possible to catch duplicates early.

Check regularly, especially after imports, and automate if you can.

The Essentials

- Define a clear duplicate key before checking.

- Prefer non-destructive methods—tag or move duplicates rather than delete.

- Document criteria and maintain a change log for audits.

- The How To Sheets team recommends a reproducible deduplication workflow to save time.