Identifying duplicates in Google Sheets: A Practical Guide

Learn practical, step-by-step methods to identify duplicates in Google Sheets, using COUNTIF, conditional formatting, and filters to clean data efficiently.

In Google Sheets, identifying duplicates is a common data-cleaning task. You can spot exact duplicates with COUNTIF formulas, highlight them with conditional formatting, and surface them with FILTER or UNIQUE. This quick approach gives you a reliable, repeatable workflow to flag, review, and decide how to handle duplicates across rows or columns.

Understanding duplicates in Google Sheets

Identifying duplicates in Google Sheets is a foundational data-cleaning skill. Whether you manage student rosters, sales leads, or inventory lists, duplicates can distort summaries, skew analytics, and confuse decision-making. The core idea is to detect values that appear more than once within a single column, across a combination of columns, or across entire rows, depending on your data structure. Start by clarifying what counts as a duplicate in your context: exact text matches, numeric equivalence, or case-insensitive matches. (COUNTIF and COUNTIFS already ignore letter case, so "Alice" and "alice" count as the same value.) In practice, you'll often normalize data first (trim spaces, standardize capitalization, and remove non-printing characters) so that duplicates aren't hidden by formatting quirks. Google Sheets gives you several reliable techniques: formulas like COUNTIF, the built-in Remove duplicates tool (Data > Data cleanup > Remove duplicates), and visual cues through conditional formatting. Choose a method based on your dataset size and on whether you need to flag duplicates for review or extract just the duplicates for further inspection. This article walks you through practical, step-by-step methods for each.

Why duplicates matter in Google Sheets

Duplicates in Google Sheets can subtly distort reports by inflating totals, misrepresenting the number of unique records, and complicating data validation. When your dataset contains repeated entries for customers, products, or transactions, pivot tables and summary charts may double-count them. The impact is not just numeric: it can erode trust in your analysis and lead to poor business decisions. The goal of identifying duplicates is to create a clean baseline you can rely on for forecasting, budgeting, and performance tracking. By standardizing data-entry practices and applying repeatable detection methods, you reduce manual cleanup time and improve data integrity across projects.

Overview of quick techniques

There are four core approaches you'll encounter when identifying duplicates in Google Sheets: 1) COUNTIF and COUNTIFS for exact matches, 2) conditional formatting for visual flagging, 3) FILTER and UNIQUE to extract and list duplicates, and 4) data normalization to catch duplicates hidden by formatting differences. COUNTIF counts how many times a value appears in a range, making it easy to label duplicates; COUNTIFS does the same for combinations of columns. Conditional formatting provides immediate visual cues without changing the data. FILTER combined with COUNTIF, optionally wrapped in UNIQUE, creates a separate view of duplicates for review. Together, these techniques cover most common data-cleaning scenarios and scale from small lists to multi-column tables.

Method 1: COUNTIF for a single column

To detect duplicates in a single column, use COUNTIF to count how often each value occurs. For example, in B2 enter =IF(COUNTIF($A$2:$A$100, A2)>1, "Duplicate", "Unique") and copy the formula down the rest of the column. This flags every occurrence of a repeated value, including the first, so you can review them quickly. If you only want a list of the duplicated values, filter the column by that count and wrap the result in UNIQUE so each repeated value appears once: =UNIQUE(FILTER(A2:A100, COUNTIF(A2:A100, A2:A100)>1)).

TIP: Use absolute references ($A$2:$A$100) for the range so it stays fixed when you copy the formula down; only the A2 reference should shift from row to row.
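To make explicit what the helper column and the extractor compute, here is a minimal Python sketch that mirrors both formulas outside Sheets. It assumes the column has already been read into a list of strings, and the function names are hypothetical. Because COUNTIF ignores letter case, the sketch case-folds values before counting; it does not reproduce COUNTIF's wildcard (`*`, `?`) or comparison-operator parsing, and folding case when de-duplicating the extracted list is a simplifying assumption.

```python
from collections import Counter


def countif_key(value: str) -> str:
    # Hypothetical helper: models COUNTIF's case-insensitivity only (no wildcards).
    return value.casefold()


def flag_duplicates(column: list[str]) -> list[str]:
    """Mirror =IF(COUNTIF($A$2:$A$100, A2)>1, "Duplicate", "Unique") for every row."""
    counts = Counter(countif_key(v) for v in column)
    return ["Duplicate" if counts[countif_key(v)] > 1 else "Unique" for v in column]


def duplicated_values(column: list[str]) -> list[str]:
    """Roughly mirror =UNIQUE(FILTER(A2:A100, COUNTIF(A2:A100, A2:A100)>1)).

    Keeps the first spelling of each repeated value, in sheet order.
    """
    counts = Counter(countif_key(v) for v in column)
    seen: set[str] = set()
    result: list[str] = []
    for v in column:
        key = countif_key(v)
        if counts[key] > 1 and key not in seen:
            seen.add(key)
            result.append(v)
    return result


emails = ["alice@example.com", "bob@example.com", "Alice@example.com", "carol@example.com"]
print(flag_duplicates(emails))    # ['Duplicate', 'Unique', 'Duplicate', 'Unique']
print(duplicated_values(emails))  # ['alice@example.com']
```

Counting once and then looking up each row is the same per-row test the helper column performs, just expressed as a single pass.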

Method 2: COUNTIFS for duplicates across multiple columns

When duplicates depend on more than one field (for example, the same name in column A and the same date in column B), use COUNTIFS. In C2 you could enter =IF(COUNTIFS($A$2:$A$100, A2, $B$2:$B$100, B2)>1, "Duplicate", "Unique"). This flags rows that share the same combination of values across those columns. You can then filter or copy the flagged rows for review.

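TIP: Include every column that defines a unique record, and only those. Leaving one out flags rows that differ in that column (false positives), while adding unrelated columns can hide real duplicates (false negatives).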
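The same COUNTIFS logic can be sketched in Python by treating the tuple of fields as one combined key. This is an illustration under assumptions: the rows and the function name `flag_multi_column` are hypothetical, fields are case-folded to approximate COUNTIFS, and wildcard or operator parsing in criteria is not modeled. The second call shows how checking too few columns produces false positives.

```python
from collections import Counter


def flag_multi_column(rows: list[tuple[str, ...]]) -> list[str]:
    """Mirror =IF(COUNTIFS($A$2:$A$100, A2, $B$2:$B$100, B2)>1, "Duplicate", "Unique").

    A row counts as a duplicate only when the whole combination of fields repeats.
    Assumption: fields are case-folded to approximate COUNTIFS matching.
    """
    def key(row: tuple[str, ...]) -> tuple[str, ...]:
        return tuple(field.casefold() for field in row)

    counts = Counter(key(r) for r in rows)
    return ["Duplicate" if counts[key(r)] > 1 else "Unique" for r in rows]


visits = [
    ("Ana", "2024-01-05"),
    ("Ana", "2024-01-06"),
    ("ana", "2024-01-05"),
]
# Name and date together: only rows 1 and 3 match.
print(flag_multi_column(visits))  # ['Duplicate', 'Unique', 'Duplicate']
# Name alone: every row is flagged, which is a false positive for row 2.
print(flag_multi_column([(name,) for name, _ in visits]))  # ['Duplicate', 'Duplicate', 'Duplicate']
```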

Method 3: Using conditional formatting to highlight duplicates

Conditional formatting provides a non-destructive, visual way to spot duplicates as you work. Select the range (A2:A100), then go to Format > Conditional formatting. Under Format rules, choose "Custom formula is", enter =COUNTIF($A$2:$A$100, $A2)>1, and pick a distinct fill color. For duplicates defined by two columns, apply the rule to A2:B100 with a formula such as =COUNTIFS($A$2:$A$100, $A2, $B$2:$B$100, $B2)>1. The rule highlights every occurrence of a duplicate, including the first.

TIP: To cover data you add later, extend the rule's range (and the range inside the formula) beyond the current last row, for example to A2:A1000.
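As a rough model of how the custom formula is applied, the Python sketch below returns the sheet row numbers the single-column rule would fill. It assumes the data starts in row 2, and `rows_to_highlight` is a hypothetical name. The point it illustrates is that the absolute range stays fixed while the relative `$A2` reference shifts for each cell, so every occurrence of a repeated value is highlighted.

```python
from collections import Counter


def rows_to_highlight(column: list[str], first_row: int = 2) -> list[int]:
    """Return the sheet rows a rule like =COUNTIF($A$2:$A$100, $A2)>1 would fill.

    The rule is evaluated once per cell in the applied range: the relative part
    ($A2 -> $A3 -> ...) moves, while $A$2:$A$100 stays the same.
    Assumption: matching is case-insensitive, like COUNTIF; wildcards are ignored.
    """
    counts = Counter(v.casefold() for v in column)
    return [
        first_row + offset
        for offset, value in enumerate(column)
        if counts[value.casefold()] > 1
    ]


print(rows_to_highlight(["SKU-1", "SKU-2", "SKU-1", "SKU-3", "SKU-2"]))  # [2, 3, 4, 6]
```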

Method 4: Using UNIQUE and FILTER to surface duplicates

To surface duplicates for review, combine FILTER with COUNTIF. For a single column, use =FILTER(A2:A100, COUNTIF(A2:A100, A2:A100)>1). To show only the unique duplicated values, wrap with UNIQUE: =UNIQUE(FILTER(A2:A100, COUNTIF(A2:A100, A2:A100)>1)). For multi-column duplicates, adapt the approach with COUNTIFS.

TIP: Sort the result to group identical duplicates together, for example =SORT(FILTER(A2:A100, COUNTIF(A2:A100, A2:A100)>1)), which makes review more efficient.
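A minimal Python sketch of the sorted review list, assuming the column is available as a list of strings (`sorted_duplicates` is a hypothetical name). It keeps every occurrence of a repeated value, as FILTER does, and then sorts. Sorting case-insensitively here is a choice made so that differently-cased copies sit next to each other; it is not a claim about how SORT orders text.

```python
from collections import Counter


def sorted_duplicates(column: list[str]) -> list[str]:
    """Roughly mirror =SORT(FILTER(A2:A100, COUNTIF(A2:A100, A2:A100)>1)).

    Assumption: counting is case-insensitive, like COUNTIF; wildcards are ignored.
    """
    counts = Counter(v.casefold() for v in column)
    repeated = [v for v in column if counts[v.casefold()] > 1]  # the FILTER step
    return sorted(repeated, key=str.casefold)  # group copies together for review


print(sorted_duplicates(["pear", "apple", "Pear", "fig", "apple"]))
# ['apple', 'apple', 'pear', 'Pear']
```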

Clean-up strategies after identifying duplicates

After identifying duplicates, decide whether to delete, consolidate, or flag duplicates for review. If your objective is a clean master list, remove extra occurrences while preserving a single authoritative record. For records that require historical context, consider consolidating data into a single row and annotating the source. Always back up the dataset before bulk deletions, and validate the results by spot-checking after changes.

TIP: Maintain a log of changes or create a versioned copy of your sheet before performing any deletions.
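One way to picture the "keep a single authoritative record" strategy is the Python sketch below, which keeps the first row for each key and returns the removed rows separately so they can go into the change log. Treating the first row in sheet order as authoritative is an assumption; your data may call for the most recent or most complete row instead. The function `keep_first` and the sample orders are hypothetical.

```python
Row = dict[str, str]


def keep_first(rows: list[Row], key_fields: list[str]) -> tuple[list[Row], list[Row]]:
    """Split rows into (kept, removed), keeping the first row seen for each key.

    Assumption: key fields are trimmed and case-folded so that stray edge spaces
    and letter case do not hide a duplicate.
    """
    seen: set[tuple[str, ...]] = set()
    kept: list[Row] = []
    removed: list[Row] = []
    for row in rows:
        key = tuple(row[field].strip().casefold() for field in key_fields)
        if key in seen:
            removed.append(row)  # later copy: candidate for deletion, record it in the log
        else:
            seen.add(key)
            kept.append(row)  # first occurrence: treated as the authoritative record
    return kept, removed


orders = [
    {"order": "A-100", "note": "original"},
    {"order": "A-101", "note": ""},
    {"order": "a-100 ", "note": "re-entered"},
]
kept, removed = keep_first(orders, ["order"])
print([r["order"] for r in kept])  # ['A-100', 'A-101']
print(removed)                     # [{'order': 'a-100 ', 'note': 're-entered'}]
```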

Common pitfalls and best practices

Be mindful of common pitfalls such as leading/trailing spaces, mixed data types (numbers stored as text), and inconsistent capitalization. Normalize data first with TRIM, UPPER or LOWER, and CLEAN (which removes non-printing characters), for example in a helper column such as =LOWER(TRIM(CLEAN(A2))), and run your duplicate checks against that column. On large ranges, many per-row COUNTIF formulas can slow the sheet down; limit ranges to the rows you actually use and keep intermediate results in helper columns rather than nesting complex formulas. Establish consistent data-entry rules to minimize duplicates at the source.

NOTE: If your data connects to external sources, duplicates may reappear after refresh; re-check rules after updates.
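The normalization helper column can be approximated in Python as shown below. This is a sketch under stated assumptions: it treats ASCII codes 0-31 as the non-printing characters CLEAN removes, which may not match CLEAN exactly, and it does not address numbers stored as text. The function name `normalize` is hypothetical. The last line shows how normalization collapses four raw spellings into two distinct keys.

```python
import re


def normalize(text: str) -> str:
    """Approximate a helper column like =LOWER(TRIM(CLEAN(A2)))."""
    # CLEAN (assumed): drop ASCII control characters, codes 0-31.
    printable = "".join(ch for ch in text if ord(ch) >= 32)
    # TRIM: collapse repeated spaces and strip leading/trailing spaces.
    trimmed = re.sub(r" {2,}", " ", printable).strip(" ")
    # LOWER: make capitalization consistent.
    return trimmed.lower()


raw = ["ACME Corp", "  acme   corp", "Acme Corp\n", "Globex"]
print([normalize(v) for v in raw])  # ['acme corp', 'acme corp', 'acme corp', 'globex']
print(len(set(raw)), len({normalize(v) for v in raw}))  # 4 2
```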

Case studies and real-world templates

In practical scenarios, teams frequently start with a copy of the production sheet, run a COUNTIF-based detector on a key identifier (like email or order number), then iterate across related columns to catch multi-field duplicates. A common template includes a helper column that flags duplicates, a filtered view that shows only duplicates, and a separate sheet for review notes. For students or small businesses, a lightweight template with conditional formatting and a simple duplicate extractor often suffices to keep data clean with minimal overhead.

Tools & Materials

- Computer or device with internet access (any modern browser with Google Sheets access; Chrome recommended)

- Google account (must have permission to edit the target Google Sheet)

- Backup copy of dataset (create a local or cloud backup before bulk deletions or structural changes)

- Sample dataset or export file, CSV or Excel (helps you practice detection on representative data)

- Formula reference sheet (a cheatsheet of COUNTIF/COUNTIFS syntax and conditional formatting rules)

Steps

Estimated time: 20-40 minutes

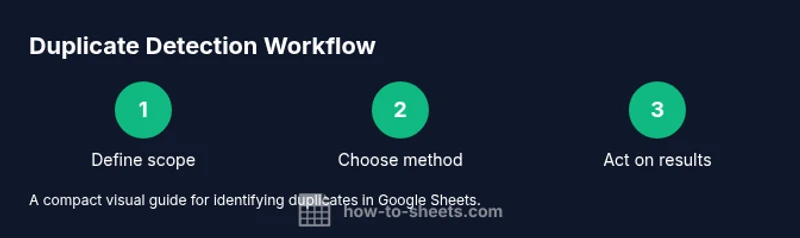

- 1

Open dataset and define scope

Open the Google Sheet containing the data and identify the exact columns or ranges to check for duplicates. Decide whether duplicates will be detected within a single column, across multiple columns, or across rows.

Tip: Document the scope to avoid applying rules to unrelated data.

- 2

Choose a detection method

Decide whether COUNTIF, COUNTIFS, or conditional formatting best fits your needs based on the data structure and whether you need to flag, extract, or visually highlight duplicates.

Tip: Starting with COUNTIF is often simplest for single-column checks.

- 3

Apply COUNTIF for single-column duplicates

Enter a COUNTIF formula in a helper column, for example in B2: =IF(COUNTIF($A$2:$A$100, $A2)>1, "Duplicate", "Unique"). Copy it down to cover the range.

Tip: Lock the range with $ so it stays fixed when you copy the formula down.

- 4

Set up conditional formatting

Apply a conditional formatting rule to the target range to visually highlight duplicates using a formula like =COUNTIF($A$2:$A$100, $A2)>1.

Tip: Use a distinct color and test on a small sample first.

- 5

Extract duplicates for review

Use FILTER or a dedicated duplicate column to extract only the duplicate rows for a review list (e.g., =FILTER(A2:A100, COUNTIF(A2:A100, A2:A100)>1)).

Tip: Sort the extracted list to group identical duplicates together.

- 6

Review and decide on action

Review flagged duplicates and decide whether to remove, consolidate, or annotate. If removing, do it in a copy of the sheet first to validate results.

Tip: Maintain a change log for traceability.

FAQ

What is considered a duplicate in Google Sheets?

A duplicate is a value that appears more than once in the specified scope (single column, across columns, or within rows). It can be exact text, a numeric value, or a combination of fields depending on your criteria.


How do I find duplicates across multiple columns?

Use COUNTIFS to define multiple criteria across columns (for example, same name in column A and same date in column B). This flags rows that share the same combination across the selected fields.


Can I identify near duplicates or partial matches?

Google Sheets does not have built-in fuzzy matching. You can approximate it with text functions such as SEARCH and REGEXMATCH, or by normalizing data and comparing the cleaned values. For precise matches, rely on exact text comparisons.


What should I do after identifying duplicates?

Decide to delete, consolidate, or annotate duplicates. Create a backup, test the action on a sample, and keep a log of changes to maintain data integrity.


How can I prevent duplicates in the future?

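Enforce data-entry rules, use data validation, and establish standardized formats. For example, a data validation rule with a custom formula such as =COUNTIF($A$2:$A$100, A2)=1, applied to A2:A100, flags or rejects a value that already exists in the column. Run duplicate checks regularly as part of your data-hygiene routine.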
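The logic behind a validation rule such as =COUNTIF($A$2:$A$100, A2)=1 can be sketched in Python: a new entry passes only if, once added, it occurs exactly once in the column. This is an illustrative model, not Sheets' validation engine; the function `accepts` and the roster are hypothetical, and matching is case-folded on the assumption that the rule inherits COUNTIF's case-insensitivity.

```python
def accepts(existing: list[str], new_value: str) -> bool:
    """Mimic a validation rule such as =COUNTIF($A$2:$A$100, A2)=1 for one new entry.

    The rule passes only if, after the entry is added, the value occurs exactly once.
    Assumption: matching is case-insensitive, like COUNTIF.
    """
    column_after_entry = existing + [new_value]
    target = new_value.casefold()
    count = sum(1 for value in column_after_entry if value.casefold() == target)
    return count == 1


roster = ["Alice", "Bob"]
print(accepts(roster, "Carol"))  # True
print(accepts(roster, "alice"))  # False: "Alice" is already in the column
```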


Does performance get affected with large datasets?

Yes. Large sheets can slow down when many cells evaluate complex formulas over long ranges. Use helper columns, limit ranges to the rows you actually use, and consider splitting data across multiple sheets to keep the file responsive.



The Essentials

- Identify the scope before acting

- Use COUNTIF/COUNTIFS for precise duplicates

- Visual cues speed up review with conditional formatting

- Back up data prior to deletions

- Validate results with small tests before scaling