Google Sheets Size Limit: A Practical Guide to Capacity and Performance

Explore the Google Sheets size limit, how it affects large datasets, and practical strategies to stay within the cap while preserving performance. Learn from How To Sheets’ analysis and implement proven approaches.

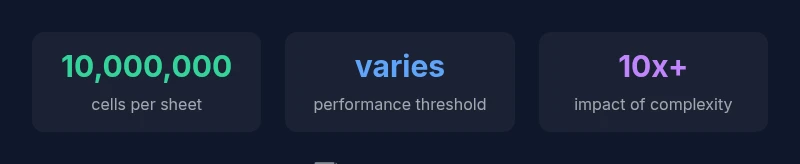

Google Sheets enforces a size limit that governs how much data a single spreadsheet can hold. The official cap is widely cited as up to 10 million cells per spreadsheet, but performance and collaboration issues often show up long before a workbook reaches that ceiling. Understanding this limit helps you plan data architecture, avoid crashes, and decide when to split data into multiple files or move to alternatives.

Understanding the Google Sheets size limit

The Google Sheets size limit defines how much data a single workbook can store in cells; images, charts, and other embedded objects do not count toward it. For many users the cap is less a wall they hit than a distant ceiling, because performance problems tend to appear well before the cell count is reached. According to How To Sheets' analysis, the official limit is commonly cited as up to 10 million cells per spreadsheet. In practice, what you can do in a single workbook depends on data types, formulas, formatting, and the number of sheets you include. A simple dataset with clean numbers and minimal formatting behaves very differently from a sheet packed with heavy array formulas or conditional formatting rules. That makes proactive planning essential once you start expanding beyond a few hundred thousand cells. In short, the Google Sheets size limit is real, but the practical boundary is shaped by content, usage, and collaboration patterns.

The official limit and what it means for your spreadsheets

The official limit is widely reported as up to 10 million cells per spreadsheet, and this ceiling is shared by all sheets (tabs) in a workbook. Not every cell costs the same, though. Blank cells in a tab's grid still count toward the cap but are cheap to recalculate, while complex formulas, formatting, and linked data are what drive up recalculation time. Once a sheet holds tens of thousands of used cells with heavy formulas, you may notice longer load times, slower edits, and lag during collaboration. For teams, this means structuring workbooks to separate inputs, intermediate calculations, and outputs. Some organizations adopt a "hub and spoke" model in which one master sheet imports data from smaller, purpose-built sheets. Remember that 10 million is a hard ceiling, not a performance target: real-world projects hit performance constraints much sooner, depending on formula complexity and concurrent edits.
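Because the cap is shared by every tab and, as it is commonly described, counts each cell in a tab's grid whether or not it holds data, even blank tabs consume budget. This minimal Python sketch makes that arithmetic concrete. It assumes a fresh tab opens with 1,000 rows and 26 columns (A to Z); the helper names `grid_cells` and `remaining_cells` are hypothetical and not part of any Google API.

```python
# Sketch only: models the 10 million-cell cap as a budget shared by all tabs,
# where every cell in a tab's grid counts, including empty ones (assumption
# based on how the cap is commonly described).
CELL_CAP = 10_000_000                      # per-spreadsheet ceiling
DEFAULT_ROWS, DEFAULT_COLS = 1_000, 26     # assumed size of a new, blank tab


def grid_cells(rows: int, cols: int) -> int:
    """Cells a tab allocates, whether or not they hold data (hypothetical helper)."""
    return rows * cols


def remaining_cells(tabs: list[tuple[int, int]], cap: int = CELL_CAP) -> int:
    """Budget left after summing the grid size of every (rows, cols) tab."""
    return cap - sum(grid_cells(rows, cols) for rows, cols in tabs)


blank_tab = grid_cells(DEFAULT_ROWS, DEFAULT_COLS)
print(blank_tab)                 # 26000 cells for an empty default tab
print(CELL_CAP // blank_tab)     # 384 blank default tabs fit under the cap
print(remaining_cells([(50_000, 40), (1_000, 26)]))  # 7974000
```

Under this model, deleting unused rows and columns shrinks a tab's grid and frees budget, which is why trimming empty space can matter as much as trimming data.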

How data patterns affect performance near the limit

Data distribution matters. A dense database-like sheet with thousands of unique formulas, array formulas, and cross-sheet references can slow down as soon as the active range grows. Recalculation cascades trigger redraws and updates across multiple tabs; when dozens of VLOOKUP or FILTER operations run simultaneously, the time to recalculate increases. Similarly, heavy conditional formatting rules applied across many rows, or data validation on thousands of cells, add overhead even if you rarely interact with those cells. If you plan to keep a large dataset in Sheets, consider modularization: break data into linked sheets, use QUERY and FILTER to present subsets, and cache results in static ranges instead of re‑computing them on every edit. The bottom line: patterns matter as much as size.
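Google does not document how its recalculation engine works, so the following is only an analogy in Python. It contrasts many VLOOKUP-style scans, where each lookup walks the table from the top, with building an index once and reusing it, which is the spirit of caching results instead of recomputing them. The function names and the "rows scanned" cost measure are illustrative assumptions.

```python
# Analogy only: not how Google Sheets evaluates formulas internally.
# Cost is measured as "rows scanned" so the two strategies can be compared.


def repeated_scans(keys, table):
    """VLOOKUP-style: scan the table from the top for every key."""
    rows_scanned = 0
    results = []
    for key in keys:
        found = None
        for row_key, value in table:
            rows_scanned += 1
            if row_key == key:
                found = value
                break  # exact match found; stop at the first hit
        results.append(found)
    return results, rows_scanned


def indexed_lookups(keys, table):
    """Build a key -> value index in one pass, then reuse it for every key."""
    index = {}
    for row_key, value in table:
        index.setdefault(row_key, value)  # keep the first match, like VLOOKUP
    return [index.get(key) for key in keys], len(table)


table = [(i, i * 10) for i in range(1_000)]
keys = [999] * 50  # 50 lookups, all for a key near the bottom of the table
slow, slow_cost = repeated_scans(keys, table)
fast, fast_cost = indexed_lookups(keys, table)
print(slow == fast, slow_cost, fast_cost)  # True 50000 1000
```

Both strategies return the same answers, but the scanning cost grows with table size times the number of lookups, which is consistent with lookup-heavy sheets slowing down as their ranges grow.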

Counting cells and estimating size in practice

A practical way to assess size is to compute the cells each tab actually occupies rather than reasoning about the limit in the abstract. If a workbook has 10 sheets and each sheet uses 50,000 rows by 100 columns, the total is 50,000 × 100 × 10 = 50,000,000 cells, five times the official cap. Sheets will not let a workbook grow past 10 million cells, so a design like this has to be split or slimmed down from the start, and a workbook even approaching that density would likely be slow well before the cap. To measure a real workbook, record two numbers per tab: its grid size (all rows × all columns, which is what counts toward the cap, empty cells included) and its data area (non-empty rows × columns). You can total these by hand or with a short Apps Script, and the difference shows how much empty grid you could delete. If you routinely import data, keep a separate data intake sheet and then push final results into a summary sheet. By maintaining a clear separation between raw data and computed outputs, you reduce the risk of hitting the size cap unexpectedly. Finally, purge dead data and delete unused rows and columns to extend workbook life.
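The bookkeeping described in this section can be sketched in Python. The sketch assumes you have collected four numbers per tab: the grid size (in Apps Script, `Sheet.getMaxRows()` and `Sheet.getMaxColumns()` return these) and the data area, approximated as the bounding box of non-empty cells (`Sheet.getLastRow()` and `Sheet.getLastColumn()`). `workbook_report` is a hypothetical helper, and treating the data area as a rectangle is a simplification.

```python
# Sketch: compare what counts toward the cap (grid) with what holds data.
# Input per tab: (grid_rows, grid_cols, data_rows, data_cols); the data area
# is treated as a simple rectangle, which is an approximation.
CELL_CAP = 10_000_000


def workbook_report(tabs: dict[str, tuple[int, int, int, int]]) -> dict:
    grid_total = 0
    data_total = 0
    for grid_rows, grid_cols, data_rows, data_cols in tabs.values():
        grid_total += grid_rows * grid_cols
        data_total += data_rows * data_cols
    return {
        "grid_cells": grid_total,                 # counts toward the cap
        "data_cells": data_total,                 # cells that actually hold data
        "unused_cells": grid_total - data_total,  # empty grid you could delete
        "over_cap": grid_total > CELL_CAP,
    }


# The 10-tab scenario from the text: 50,000 rows x 100 columns each.
planned = {f"Sheet{i}": (50_000, 100, 50_000, 100) for i in range(1, 11)}
print(workbook_report(planned)["grid_cells"])  # 50000000, five times the cap

# A hypothetical two-tab workbook with some empty grid left over.
actual = {"Raw": (60_000, 30, 52_000, 24), "Summary": (1_000, 26, 40, 8)}
print(workbook_report(actual))
```

For the hypothetical workbook, about 578,000 of its 1,826,000 grid cells hold no data, so deleting unused rows and columns would free roughly a third of what it consumes.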

Strategies to work with large datasets in Sheets

To manage large datasets without burning through the limit, combine several tactics. First, use QUERY or FILTER to present only the subset of data you need in your active view, rather than displaying everything at once. Second, minimize volatile functions such as NOW, TODAY, and RAND, which recalculate on every change, and avoid applying array formulas or conditional formatting to entire columns; target specific ranges instead. Third, split data across multiple files and reference them with IMPORTRANGE (optionally wrapped in QUERY); separate tabs in the same file share one cell budget, so only separate files add capacity. Fourth, cache results by storing calculated values in static ranges rather than recomputing them on every edit. Finally, design a data architecture that keeps raw data isolated from reports, so you can refresh outputs without increasing the active data footprint.
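When splitting data across files, a rough plan helps decide how many files to create before importing anything. This Python sketch assumes each file should stay at a chosen fraction of the 10 million-cell cap (the 50% `headroom` default is an arbitrary safety margin, not a Google rule) and that every file keeps the same column layout; `plan_split` is a hypothetical helper.

```python
import math

CELL_CAP = 10_000_000


def plan_split(total_rows: int, cols: int, headroom: float = 0.5,
               cap: int = CELL_CAP) -> tuple[int, int]:
    """Return (files_needed, rows_per_file) so each file's grid stays
    within `headroom` of the cap (0.5 = fill each file to at most half)."""
    if not 0 < headroom <= 1:
        raise ValueError("headroom must be in (0, 1]")
    budget = int(cap * headroom)        # cells allowed per file
    rows_per_file = budget // cols      # whole rows that fit in that budget
    if rows_per_file == 0:
        raise ValueError("too many columns for the per-file budget")
    files = math.ceil(total_rows / rows_per_file)
    return files, rows_per_file


print(plan_split(2_000_000, 20))                 # (8, 250000)
print(plan_split(2_000_000, 20, headroom=0.25))  # (16, 125000)
```

A reporting file can then pull only the slices it needs from each part with IMPORTRANGE, ideally wrapped in QUERY so the imported ranges stay small.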

When to consider alternatives beyond Google Sheets

If your dataset approaches the practical limits of Sheets, it is time to consider alternatives. For very large data, Google BigQuery offers scalable storage and fast analytics without the same in-browser limitations. For offline work or compatibility with traditional workflows, migrating a portion of data to Excel or exporting to CSV can simplify analysis. You can also use Sheets as a front-end dashboard while connecting to a more robust backend—scripting and connectors (Apps Script, API access) make this feasible. The goal is to preserve collaboration while avoiding the performance penalties that come with oversized workbooks.
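One way to keep Sheets as a lightweight front end is to aggregate raw records in the backend and send only the summary to the dashboard. This Python sketch shows the idea with an in-memory list standing in for a database or BigQuery result; the `(category, amount)` record shape and the `summarize` name are illustrative assumptions, and the step of writing the result into Sheets (for example through the Sheets API or Apps Script) is left out.

```python
from collections import defaultdict


def summarize(records):
    """Collapse raw (category, amount) rows into one sorted row per category."""
    totals = defaultdict(float)
    for category, amount in records:
        totals[category] += amount
    return sorted(totals.items())


regions = ("East", "North", "West")
raw = [(regions[i % 3], 1.0) for i in range(300_000)]  # stand-in for backend rows
summary = summarize(raw)
print(summary)  # [('East', 100000.0), ('North', 100000.0), ('West', 100000.0)]

# Cells a dashboard would need at 2 columns per row: raw vs summarized.
print(len(raw) * 2, len(summary) * 2)  # 600000 6
```

The raw rows never touch the spreadsheet's cell budget; only the handful of summary cells do.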

Google Sheets size limits and practical considerations

| Aspect | Limit | Notes |
|---|---|---|
| Total cells per spreadsheet | Up to 10,000,000 cells | Official ceiling shared by all tabs; performance varies by content |
| Performance threshold | Varies by use case | Heavy formulas and formatting push limits earlier |
| Per-sheet cell limit | No separate published per-sheet limit | Modular designs help manage growth |

FAQ

What is the official size limit for Google Sheets?

The official limit is commonly cited as up to 10 million cells per spreadsheet. Real-world performance often slows earlier, depending on formulas, formatting, and collaboration.

Do charts or images count toward the cell limit?

Charts and images do not count as cells toward the limit, but they can increase file size and slow down rendering or loading times.

How can I estimate how many cells my sheet uses?

Multiply each sheet's rows × columns (its full grid, since empty cells count toward the cap) and sum across sheets, or use Apps Script to tally grid sizes alongside the used ranges.

What should I do if I hit the limit?

Split data into multiple files, archive older data, use QUERY to reduce active datasets, or move raw data to a database and connect via APIs.
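Archiving can be as simple as moving rows older than a cutoff date into a CSV file and keeping only recent rows in the sheet. This Python sketch assumes rows are dictionaries with a `date` key and returns the CSV text instead of writing a file; `archive_before` and the sample rows are hypothetical.

```python
import csv
import io
from datetime import date


def archive_before(rows, cutoff):
    """Split rows into (kept, archived_csv_text) around a cutoff date."""
    kept, old = [], []
    for row in rows:
        (old if row["date"] < cutoff else kept).append(row)

    buf = io.StringIO()
    if old:
        writer = csv.DictWriter(buf, fieldnames=list(old[0].keys()))
        writer.writeheader()
        writer.writerows(old)  # dates are written via str(), e.g. 2023-01-15
    return kept, buf.getvalue()


rows = [
    {"date": date(2023, 1, 15), "amount": 10},
    {"date": date(2024, 3, 1), "amount": 7},
]
kept, archived = archive_before(rows, cutoff=date(2024, 1, 1))
print(len(kept))              # 1
print(archived.splitlines())  # ['date,amount', '2023-01-15,10']
```

In practice, save the CSV (or load it into a database) before deleting the archived rows from the sheet, so nothing is lost if the export fails.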

Are there per-sheet limits I should worry about?

There isn't a widely published per-sheet cell cap; all tabs draw on the same 10 million-cell budget. Perceived limits usually arise from content density, complex formulas, and cross-sheet references.

“Understanding where Google Sheets size limits bite helps teams design scalable worksheets without sacrificing collaboration.”

The Essentials

- Know the 10 million-cell cap and its practical impact on performance

- Monitor growth by tracking each tab's grid size and actual data area, not just workbook totals

- Modularize data with multiple sheets and references

- Use QUERY/FILTER to minimize loaded data in views

- Consider BigQuery or CSV exports for very large datasets

- Plan data architecture early to avoid hitting limits