Google Sheets to BigQuery: A Practical Data Pipeline Guide

Learn how to move data from Google Sheets to BigQuery with step-by-step methods, practical tips, and safeguards for reliable data analysis and scalable reporting.

You will connect a Google Sheet to BigQuery to query or copy data into a native BigQuery table. The easiest approach uses a Google Sheets external table in BigQuery and optionally a scripted data push. Prerequisites include Sheet access and BigQuery project permissions.

Why the integration matters for data analysis

When teams rely on Google Sheets for data collection, analysts often hit BigQuery's limits for large datasets, complex joins, and long-running queries. Linking Sheets to BigQuery allows you to run scalable analyses, join sheet data with other datasets, and produce robust dashboards. In the context of google sheets to bigquery, you get the best of both worlds: the ease of Sheets for data entry and the power of BigQuery for analytics, all without manually exporting files each week. According to How To Sheets, this bridge saves time on recurring reports and reduces data silos across teams.

Approaches to connect Sheets with BigQuery

There are several practical approaches to move or reference Sheets data in BigQuery. The simplest is to create an external table that points to your Google Sheet, then query it or materialize a native BigQuery table from it. Another option is to push new rows into BigQuery from Sheets using Apps Script or the BigQuery API, which is ideal for real-time or near-real-time workflows. A third approach is to export Sheets as CSV and load that file into Google Cloud Storage before loading into BigQuery. Each method has tradeoffs in latency, freshness, and maintenance. This guide emphasizes actionable, low-friction paths suitable for students, professionals, and small business owners.

Prerequisites and access requirements

Before you begin, ensure you have a Google Cloud project with BigQuery enabled and a Google Sheet ready for integration. The Sheet must be accessible to the service account or user performing the integration. You should also have sufficient permissions in BigQuery to create datasets, external tables, and to run data definition language (DDL) statements. Finally, enable the Google Sheets integration in your GCP environment or use Apps Script with the BigQuery Advanced Service for programmatic access.

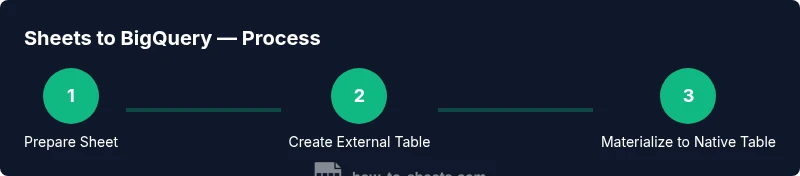

Step-by-step: external table (readable) then copy to a native table

This section outlines a robust workflow that starts with a read-only external table linked to a Google Sheet, followed by materializing data into a standard BigQuery table. This preserves the Sheet as the source of truth while enabling fast analytics in BigQuery.

- Create a BigQuery dataset to hold your external reference and final tables. This keeps data organized and access-controlled. Pro tip: name the dataset clearly to reflect the source (e.g., sheet_source).

- Tip: Plan column types ahead to minimize type conversion issues when materializing to a native table.

- In BigQuery, add a new external table linked to your Google Sheet. You will specify the Sheet URL, the sheet range, and the header row usage. This allows querying the sheet without duplicating data.

- Tip: Share the Sheet with the Google service account used by BigQuery to avoid access errors.

- Test a simple SELECT to ensure that the external table reads correctly. Validate basic data types and a few rows for formatting consistency.

- Pro-tip: Use LIMIT to keep test queries fast while debugging.

- Create a native BigQuery table by copying data from the external table (CREATE TABLE AS SELECT). This step materializes the data for faster analytics and independent access from the original Sheet.

- Tip: Consider partitioning or clustering if the sheet contains time-series or large volumes.

- Schedule refresh logic if you expect Sheet updates. Options include Apps Script triggers or a scheduled query that re-populates the native table on a cadence.

- Warning: When refreshing, ensure you handle potential data type changes or schema drift gracefully.

- Validate data quality after each refresh. Re-check row counts, key fields, and any computed columns to ensure the native table mirrors the Sheet data.

- Pro-tip: Maintain a simple audit column (e.g., last_updated) to track refresh activity.

- Document the workflow for future maintenance. Include steps, required permissions, and troubleshooting tips so teammates can reproduce or adjust the integration.

- Tip: Attach links to the Sheet and the BigQuery resources in the documentation for quick access.

Step-by-step: Apps Script approach (automated pushes)

Apps Script can push new rows from Sheets to BigQuery, enabling near real-time updates. This method is ideal when Sheet entries are added incrementally and you want immediate availability in BigQuery.

- Enable the BigQuery API and add the BigQuery Advanced Service to your Apps Script project. This allows code-based interaction with BigQuery from Sheets.

- Tip: Use a service account with restricted permissions to minimize risk.

- Create a small trigger-based script that reads new rows from the Sheet and uses BigQuery.insertAll or a streaming insert to append rows to a native BigQuery table.

- Pro-tip: Maintain a state marker (e.g., last_seen_row) to avoid duplicate inserts.

- Map Sheet columns to BigQuery schema accurately to prevent type mismatches.

- Warning: Large Sheets may require batching and error handling to avoid quota limits.

- Implement error handling and logging so failures are visible and recoverable.

- Tip: Send notifications if a batch fails to ensure timely remediation.

- Schedule or trigger updates according to your data cadence. If Sheet data grows quickly, consider a streaming approach rather than full-table reloads.

- Pro-tip: Complement streaming with periodic reconciliations to catch drift.

- Monitor performance and adjust quotas as needed. Streaming inserts have different cost and quota profiles than batch loads.

- Note: Keep track of query performance as you scale.

- Document the Apps Script solution for future maintenance. Include deployment steps, permissions, and rollback procedures.

- Tip: Version-control your Apps Script project with a clear changelog.

Step-by-step: CSV export + Cloud Storage + BigQuery load (alternative)

This route is reliable when Sheets are large or have complex formats. Export Sheet data as CSV, upload to Google Cloud Storage, and load into BigQuery using a standard LOAD JOB.

- Export the Google Sheet as CSV. Ensure the header row is included so BigQuery can infer the schema.

- Tip: Use a consistent export range to avoid schema drift.

- Upload the CSV to a GCS bucket with appropriate permissions. Store it in a well-organized path structure.

- Pro-tip: Enable object versioning to track changes.

- Create a BigQuery load job to read the CSV into a native table. Define the schema or allow BigQuery to auto-detect with caution.

- Warning: CSVs can lead to subtle type misinterpretations if formats aren’t consistent.

- Validate the loaded data and set up a schedule if updates are periodic.

- Tip: Use a staging table before funneling into a production table to catch issues early.

- Consider cleaning steps in BigQuery (e.g., trimming strings, handling nulls) as part of the load process.

- Pro-tip: Maintain a transformation script to apply consistent data quality rules.

- Automate the end-to-end flow with a Cloud Composer or Cloud Functions trigger when the Sheet updates.

- Note: This method introduces additional moving parts but provides robust versioning and auditing.

Tips for successful Sheets-to-BigQuery workflows

- Ensure data quality at the source: clean, consistent headers and data types before loading. This reduces churn later.

- Use clear schema mapping between Sheets and BigQuery to avoid type mismatches during materialization.

- Prefer an external table first when you need live access to Sheets data without duplicating storage in BigQuery.

- Implement automated validation checks after each load to catch drift or missing rows early.

- Document everything and maintain access controls to protect sensitive data.

- Consider data governance: versioning, data lineage, and access auditing to meet compliance needs.

Tools & Materials

- Google Sheet with structured data(Headers in the first row, consistent data types per column)

- Google Cloud Project with BigQuery enabled(Dataset ready for external table and final tables)

- BigQuery dataset(Create separate dataset(s) for source/external and final tables)

- Sheet sharing permissions(Sheet accessible to the service account or user performing the integration)

- Google Cloud Storage bucket (for CSV method)(Only if using the CSV export path)

- Apps Script project (for automation)(Enable BigQuery Advanced Service if using this path)

- Browser with Google account access(For UI-based steps in BigQuery and Sheets)

Steps

Estimated time: 60-120 minutes

- 1

Prepare the Google Sheet for integration

Clean headers, ensure consistent data types, and remove extraneous formatting. This minimizes schema drift when moved to BigQuery.

Tip: Use a single header row and avoid merged cells in the data range. - 2

Create a BigQuery dataset and target table

Set up a dataset and a destination table (or staging table) to hold the loaded data. Define a clear naming convention.

Tip: Name the target table to reflect the source and purpose. - 3

Add an external table linked to the Sheet

In BigQuery, create an external table pointing to the Google Sheet URL and specify the range and header usage.

Tip: Share the Sheet with the service account used by BigQuery. - 4

Test the external table query

Run a simple SELECT to verify that data from the Sheet is readable and correctly typed.

Tip: Use LIMIT to speed up tests. - 5

Materialize data into a native BigQuery table

Run a CREATE TABLE AS SELECT from the external table to copy data into a standard BigQuery table.

Tip: Optionally apply initial transformations during this step. - 6

Set up an update/refresh approach

Choose between scheduled queries or Apps Script to refresh the native table when the Sheet changes.

Tip: Prefer incremental refresh to minimize costs. - 7

Optionally automate updates with Apps Script

If real-time updates are needed, implement a script that pushes new rows to BigQuery using the API.

Tip: Handle errors gracefully and add retry logic. - 8

Validate data and document the workflow

Run data quality checks, verify row counts, and document permissions, sources, and refresh cadence.

Tip: Maintain a changelog and reference links to the Sheet and BigQuery resources. - 9

Monitor performance and governance

Track query performance, storage costs, and access controls. Adjust schemas as data evolves.

Tip: Set up alerts for failures or anomalies.

FAQ

Can I query a Google Sheet directly in BigQuery without copying data?

Yes. Create an external table in BigQuery that references the Google Sheet. You can read the data, and optionally materialize it into a native BigQuery table using a CREATE TABLE AS SELECT statement.

Yes. You can set up an external table in BigQuery that reads directly from a Google Sheet, and you can copy it into a native table as needed.

Is the external table Read-Only, or can I write back to Google Sheets?

External tables are read-only sources. You can query the Sheet, but any write-back to Sheets from BigQuery would require a separate process (Apps Script or Sheets API).

External tables let you read Sheets data in BigQuery, but to update Sheets you’d need Apps Script or Sheets API.

How fresh is the data when using external tables?

Freshness depends on how you source data. External tables reflect the Sheet at query time; to keep BigQuery in sync, you must refresh the materialized table on a schedule or trigger updates after changes in Sheets.

Freshness depends on your refresh strategy. External tables read live from the sheet, but materialized tables require a refresh.

What are common data type issues when moving from Sheets to BigQuery?

Sheets stores data as text or numbers; you may need to cast or coerce types during materialization to match BigQuery schema (e.g., dates, timestamps). Validate types during the initial load and adjust as needed.

Common issues are mismatched text vs. number types; cast data during load to BigQuery types.

Can I automate backups of Sheet data to BigQuery?

Yes. Use a scheduled process (Apps Script, Cloud Functions, or scheduled queries) to push or copy data from Sheets to BigQuery on a regular cadence.

You can automate backups with scheduled scripts or data transfers.

What permissions are required to set this up safely?

You need access to the Google Sheet (view/edit as required) and BigQuery permissions to create datasets, external tables, and run load jobs. Use least-privilege service accounts where possible.

You’ll need Sheet access and BigQuery permissions with least-privilege service accounts.

Watch Video

The Essentials

- Link Sheets data into BigQuery for scalable analytics

- Choose external table + materialization for live access + fast queries

- Automate refreshes to keep data current

- Validate data quality after every load

- Document the workflow for team continuity