Compare Google Sheets for Duplicates: A Practical Step-by-Step Guide

Learn how to compare Google Sheets for duplicates using built-in tools, formulas, and workflows. This practical guide covers conditional formatting, COUNTIF/COUNTIFS, UNIQUE, and cross-sheet checks to keep data clean and reliable.

You will learn how to compare Google Sheets for duplicates across columns or entire sheets, using built-in functions like conditional formatting, formulas (COUNTIF/COUNTIFS, FILTER, UNIQUE), and add-on tools. You'll compare data ranges, identify exact vs. partial matches, and create a reusable workflow. This guide covers setup, step-by-step methods, and pitfalls to avoid.

What duplicates are and why they matter in data quality

Duplicates can distort analyses, mislead decisions, and inflate counts in Google Sheets. When two or more rows or cells share identical values, downstream calculations—such as pivot tables, charts, and summary metrics—may become biased or misleading. Understanding how to compare across ranges, columns, or even multiple sheets helps ensure data integrity and reliable results. This section explains why deduplication matters for students, professionals, and small business owners who rely on Sheets for reporting, budgeting, or inventory tracking. By recognizing where duplicates arise (entry errors, imports, or consolidated lists) you can design a repeatable workflow that minimizes manual checks and speeds up cleanup. In practice, you’ll balance strict exact matching with sensible tolerance for minor variations, depending on your dataset and goals.

Quick methods at a glance: when to use what

Here is a compact map of the most common approaches, so you can choose the right tool for the job:

- Conditional formatting: visually spot duplicates as you review data.

- COUNTIF/COUNTIFS: count occurrences and flag rows that exceed a threshold.

- UNIQUE with FILTER: create a deduplicated view or extract duplicates into a separate sheet.

- Cross-sheet comparisons: check across multiple ranges or sheets for comprehensive cleanup.

- Case sensitivity and exact vs partial matching: decide if you need strict matches or normalized comparisons (e.g., trim spaces, convert to lowercase).

Method 1: Conditional formatting to highlight duplicates

Conditional formatting is often the fastest way to scan for duplicates. Select the range you want to check, open Format > Conditional formatting, choose "Custom formula is", and enter a formula like =COUNTIF($A$1:$A$1000, A1) > 1 to highlight cells that appear more than once. To highlight entire rows, apply the rule to the full range (for example A1:C1000) and anchor the column in the criterion: =COUNTIF($A$1:$A$1000, $A1) > 1. When a duplicate is defined by several columns, use a helper column to combine the key fields first (e.g., =A2 & "||" & B2) and then run the duplicate check on the combined key.

Pros:

- Quick visual cues

- No permanent data changes

Cons:

- Not a standalone audit; duplicates still exist unless you extract them.
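
The combined-key idea can be sketched outside Sheets to show why the separator matters. The Python below is an illustration only: `make_key` and the sample rows are hypothetical, and the function simply mirrors what =A2 & "||" & B2 produces.

```python
def make_key(first, second, sep="||"):
    """Join two fields with a separator, like =A2 & "||" & B2 in a helper column."""
    return f"{first}{sep}{second}"

# Two different rows that become identical if the fields are joined with no separator.
row_1 = ("ab", "c")
row_2 = ("a", "bc")

no_sep_collides = make_key(*row_1, sep="") == make_key(*row_2, sep="")
with_sep_collides = make_key(*row_1) == make_key(*row_2)

print(no_sep_collides)    # True: both keys are "abc"
print(with_sep_collides)  # False: "ab||c" differs from "a||bc"
```

Any separator works as long as it is unlikely to appear in the data itself.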

Method 2: COUNTIF/COUNTIFS for counting duplicates

COUNTIF counts occurrences of a specific value, while COUNTIFS extends this to multiple criteria. A common pattern is to add a helper column with =COUNTIF($A$2:$A$1000, A2), which returns how many times each value in column A appears; any result above 1 marks a duplicate. For multi-field duplicate checks, use =IF(COUNTIFS($A$2:$A$1000, A2, $B$2:$B$1000, B2) > 1, "dup", "") to flag rows that match on both fields. You can then filter or sort by the helper column to review duplicates.

Tips:

- Trim stray spaces first, since "Ana " and "Ana" count as different values. COUNTIF and COUNTIFS already ignore letter case, but UNIQUE does not, so lowercase the key if you plan to use both.

- Use absolute references ($A$2:$A$1000) for the ranges so they stay fixed when you copy the formula down, and a relative reference (A2) for the criterion.
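
For readers who want to check the helper-column logic outside Sheets, here is a minimal Python sketch of the two-field COUNTIFS flag. The function name `flag_duplicates` and the sample rows are assumptions for illustration; the case folding reflects the fact that COUNTIFS matches text without regard to case.

```python
from collections import Counter

def flag_duplicates(rows):
    """Return "dup" for every row whose (A, B) pair occurs more than once."""
    # COUNTIF/COUNTIFS compare text case-insensitively, so fold case here too.
    keys = [(str(a).lower(), str(b).lower()) for a, b in rows]
    counts = Counter(keys)
    return ["dup" if counts[key] > 1 else "" for key in keys]

rows = [
    ("Ana", "2024-01-05"),
    ("Ben", "2024-01-05"),
    ("ana", "2024-01-05"),  # same pair as row 1 once case is ignored
    ("Ana", "2024-02-01"),  # same name, different date: not a duplicate
]
print(flag_duplicates(rows))  # ['dup', '', 'dup', '']
```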

Method 3: Using UNIQUE and FILTER to isolate duplicates

UNIQUE returns the distinct rows of a range, for example =UNIQUE(A2:C1000), which gives you a deduplicated view without changing the source data. To isolate duplicates instead, combine FILTER and COUNTIFS: =FILTER(A2:C1000, COUNTIFS(A2:A1000, A2:A1000, B2:B1000, B2:B1000) > 1). This returns every row whose key columns (here A and B) appear more than once, including the first occurrence. Place the formula on a separate sheet so the extracted duplicates stay apart from your original data, which makes review and later audits simpler.
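
The difference between a deduplicated view and an extract of duplicates is easy to blur, so the following Python sketch contrasts the two. The function names and sample data are hypothetical; the first function compares whole rows, while the second compares only the chosen key columns.

```python
from collections import Counter

def unique_rows(rows):
    """Keep the first occurrence of each whole row, in order (the deduplicated view)."""
    seen = set()
    result = []
    for row in rows:
        if row not in seen:
            seen.add(row)
            result.append(row)
    return result

def duplicated_rows(rows, key_columns=(0, 1)):
    """Return every row whose key columns occur more than once."""
    keys = [tuple(row[i] for i in key_columns) for row in rows]
    counts = Counter(keys)
    return [row for row, key in zip(rows, keys) if counts[key] > 1]

rows = [
    ("ana", "2024-01-05", 10),
    ("ben", "2024-01-05", 20),
    ("ana", "2024-01-05", 30),  # same key as row 1, different amount
    ("ben", "2024-01-05", 20),  # exact copy of row 2
]
print(len(unique_rows(rows)))      # 3: only the exact copy is dropped
print(len(duplicated_rows(rows)))  # 4: both keys appear twice
```

Note that row 3 survives the whole-row comparison because its amount differs, yet it is still a duplicate by key. This is why the key columns should be decided before choosing a method.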

Method 4: Cross-sheet comparison with stacked ranges

When duplicates span multiple sheets (e.g., weekly lists), you can combine ranges using array literals or auxiliary helper sheets. Google Sheets has no Excel-style 3D references, so stack the ranges vertically with a semicolon inside curly braces (a comma would place them side by side as separate columns). Example approach: create a master key column that consolidates fields across sheets, or use a formula like =QUERY(QUERY({Sheet1!A2:A; Sheet2!A2:A}, "select Col1, count(Col1) where Col1 is not null group by Col1 label count(Col1) ''", 0), "select * where Col2 > 1", 0) to list values that occur more than once across the sheets. The outer QUERY filters on the count because the QUERY language has no HAVING clause. For large workbooks, break comparisons into smaller chunks to keep calculations responsive. Consider using named ranges to simplify references.
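
The logic behind a cross-sheet check is to stack the columns, skip blanks, group, and keep counts above one. A rough Python sketch follows. The function name `cross_sheet_duplicates` and the sample IDs are illustrative assumptions, and each list stands in for one sheet's column A.

```python
from collections import Counter

def cross_sheet_duplicates(*columns):
    """Stack several columns, skip blanks, and return values seen more than once."""
    stacked = [v for column in columns for v in column if v not in ("", None)]
    counts = Counter(stacked)
    return {value: n for value, n in counts.items() if n > 1}

sheet1 = ["A-100", "A-101", "A-102", ""]
sheet2 = ["A-102", "A-103", "A-100", "A-100"]
print(cross_sheet_duplicates(sheet1, sheet2))  # {'A-100': 3, 'A-102': 2}
```

Because the columns are stacked before counting, a value repeated within a single sheet is reported as well as one shared between sheets.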

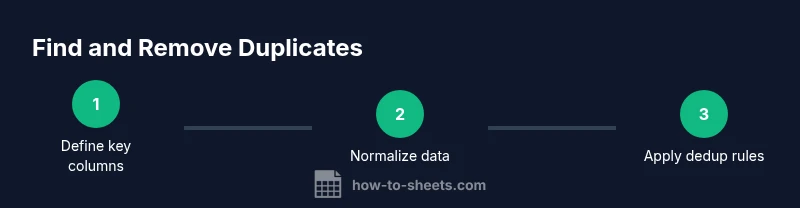

Practical workflow: building a reusable deduplication template

A practical template combines the methods above into a repeatable workflow. Start by defining the scope: which columns constitute a duplicate key? Create a helper column to generate a canonical key (normalized text, trimmed, lowercased). Apply conditional formatting to visualize duplicates, then use COUNTIFS to flag or extract duplicates. Save this as a template sheet to reuse on new datasets, and document each step so teammates can follow. This approach keeps your data clean across projects and time.
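
As a rough illustration of the canonical key, the Python sketch below approximates what TRIM, CLEAN, and LOWER do to each field before joining. The function name `canonical_key` and the "||" separator are assumptions, and the character handling is an approximation rather than an exact copy of the Sheets functions.

```python
import re

def canonical_key(*fields, sep="||"):
    """Build a normalized key: drop control characters, trim, collapse spaces, lowercase."""
    parts = []
    for field in fields:
        text = str(field)
        text = "".join(ch for ch in text if ord(ch) >= 32)  # drop control characters
        text = re.sub(r" +", " ", text).strip(" ")           # trim and collapse repeated spaces
        parts.append(text.lower())
    return sep.join(parts)

print(canonical_key("  Ana  Lopez ", "2024-01-05"))  # ana lopez||2024-01-05
print(canonical_key("ana lopez", "2024-01-05\n"))    # ana lopez||2024-01-05
```

Both calls yield the same key, so the two rows would be caught as duplicates even though the raw cells differ.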

Common pitfalls and troubleshooting

Common issues include misinterpreting partial matches as duplicates, failing to trim spaces or normalize case, and applying duplicate checks to blank rows. Always back up data before performing bulk deduplication. For very large datasets, formulas can become slow; consider using add-ons or breaking data into chunks. Finally, verify results by spot-checking flagged rows and comparing before/after counts to ensure no unintended data loss.
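
The before/after count check can be made concrete with a small Python sketch. The function name `dedupe_report` and the sample keys are hypothetical. The idea is that the number of rows removed should equal, for each key, its occurrences minus one, with blank rows left out of the count.

```python
from collections import Counter

def dedupe_report(keys):
    """Summarize a cleanup: rows before, rows after, and rows expected to be removed."""
    non_blank = [k for k in keys if k != ""]
    counts = Counter(non_blank)
    expected_removed = sum(n - 1 for n in counts.values())
    return {
        "before": len(non_blank),
        "after": len(counts),
        "expected_removed": expected_removed,
    }

report = dedupe_report(["a", "b", "a", "", "c", "a", "b"])
print(report)  # {'before': 6, 'after': 3, 'expected_removed': 3}
# The cleanup is consistent when before - after equals the expected removals.
assert report["before"] - report["after"] == report["expected_removed"]
```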

Tools & Materials

- Google account with access to Google Sheets (Needed to create, edit, and share sheets used for deduplication tasks.)

- Sample dataset in Google Sheets (Range to test duplicates; include a mix of text and numbers.)

- Backup/export option (CSV or Excel) (Before heavy deduplication, export a copy for safety.)

- Optional add-ons (Power Tools for Sheets or similar) (Useful for large datasets or advanced dedupe tools.)

- Dedicated template sheet for recurring work (Reuse steps for future datasets to save time.)

Steps

Estimated time: 30-60 minutes

- 1

Define the scope and key columns

Identify which columns form the duplicate key (for example, CombinedName and Date). If needed, create a helper column that concatenates these fields into a key. This establishes a consistent basis for detection and reduces false positives.

Tip: Document the chosen key structure so teammates apply the same logic in new datasets.

- 2

Create a canonical key (normalize data)

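In a new helper column, apply normalization functions such as TRIM, LOWER (or UPPER), and CLEAN, which removes non-printable characters. Example: =LOWER(TRIM(A2)) & "||" & LOWER(TRIM(B2)); wrap each reference in CLEAN, as in TRIM(CLEAN(A2)), if imported data may contain non-printable characters. This ensures duplicates aren’t hidden by minor formatting differences.

Tip: Keep the cell references relative (A2, B2) so each row builds its own key when you copy the formula down; reserve absolute references for the lookup ranges in later steps.

- 3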

Highlight duplicates with conditional formatting

Apply a conditional formatting rule to the key column using a formula like =COUNTIF($C$2:$C$1000, C2) > 1. This visually flags duplicates for quick review. Extend to additional columns if needed by creating a combined key.

Tip: Set a distinct color scheme to avoid confusion with other highlights in your sheet.

- 4

Flag duplicates with a helper column

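In a new column, use COUNTIFS to mark duplicates, e.g., =IF(COUNTIFS($C$2:$C$1000, C2) > 1, "dup", ""). This flags every occurrence, including the first, so do not delete all flagged rows at once. To flag only the repeats, use a running range such as =IF(COUNTIF($C$2:C2, C2) > 1, "dup", ""). Filter or sort by this column to inspect and remove duplicates safely.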

Tip: Sort by the helper column first to focus on duplicates before any deletion.

- 5

Extract duplicates or create a deduplicated view

Use FILTER in combination with COUNTIFS to extract rows that are duplicates into a new sheet, or use UNIQUE to produce a deduplicated view. This preserves the original data while enabling review and cleanup.

Tip: Keep the extracted duplicates in a clearly labeled sheet to avoid accidental deletion from the original data.

- 6

Cross-sheet checks and validation

If duplicates appear across multiple sheets, consolidate using array formulas or create a master key across sheets. Validate results by spot-checking a sample of flagged rows against the source data.

Tip: Use named ranges to simplify cross-sheet references and reduce errors.

FAQ

What is the difference between exact duplicates and duplicates that are similar?

Exact duplicates have identical values in the key fields; similar duplicates share most fields but differ in one or more. Normalize text (trim spaces, convert to lowercase) so that entries differing only in spacing or capitalization are detected rather than missed.

Exact duplicates are exact copies in the key fields; similar duplicates may only partly match. Normalize text to ensure consistent detection.
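
For similar (non-exact) matches, Sheets formulas alone are limited. The Python sketch below shows one possible way to surface near-duplicates for manual review, using the standard library's difflib. This technique is not from the guide itself, and the 0.85 threshold, the function name `similar_pairs`, and the sample names are all assumptions to tune for your data.

```python
from difflib import SequenceMatcher

def similar_pairs(values, threshold=0.85):
    """Return (i, j, ratio) for pairs whose normalized text is similar but not identical."""
    cleaned = [" ".join(v.lower().split()) for v in values]
    pairs = []
    for i in range(len(cleaned)):
        for j in range(i + 1, len(cleaned)):
            if cleaned[i] == cleaned[j]:
                continue  # exact duplicates are handled by the key-based checks
            ratio = SequenceMatcher(None, cleaned[i], cleaned[j]).ratio()
            if ratio >= threshold:
                pairs.append((i, j, round(ratio, 2)))
    return pairs

names = ["Acme Corp", "acme corp", "Acme Corp.", "Zenith Ltd"]
for i, j, ratio in similar_pairs(names):
    print(names[i], "~", names[j], ratio)
```

The pairwise loop compares every value with every other, so this suits short lists exported for review rather than very large sheets.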

Can I compare duplicates across multiple sheets in Google Sheets?

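Yes. You can create a master key by combining fields across sheets, or stack the ranges in an array literal (for example {Sheet1!A2:A; Sheet2!A2:A}) and run an array formula over the result to identify duplicates across ranges. Google Sheets does not support Excel-style 3D references. Break large comparisons into manageable chunks for performance.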

You can compare across sheets by consolidating keys or using array formulas; handle large data in parts to stay responsive.

How do I handle case sensitivity when checking for duplicates?

Normalize by applying LOWER or UPPER to the key fields and trim spaces with TRIM. COUNTIF and COUNTIFS already ignore case, but UNIQUE does not, so a normalized key ensures that 'Apple' and 'apple' are treated as the same value in every method.

Normalize case and trim spaces so duplicates aren’t missed due to capitalization or extra spaces.

What are performance considerations for large datasets?

Large datasets can slow formulas. Use helper columns, avoid volatile functions, and consider splitting data into chunks or using add-ons to optimize performance.

For big data, break the task into smaller parts and use helper columns to maintain speed.

Should I deduplicate after identifying duplicates?

Yes. Validate flagged duplicates, back up your data, and then remove or consolidate duplicates as needed. A deduplicated dataset is easier to audit and trust.

Only deduplicate after careful review and backing up your data.
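
When it is time to remove rows, it helps to mark only the repeats so the first occurrence of each key survives. In Sheets this could be done with a running range such as =IF(COUNTIF($C$2:C2, C2) > 1, "dup", ""), which is a suggested variant rather than a formula from the steps above. The Python sketch below mirrors that logic, with `flag_repeats` and the sample keys as illustrative assumptions.

```python
def flag_repeats(keys):
    """Mark only the second and later occurrences of each key."""
    seen = set()
    flags = []
    for key in keys:
        if key == "":
            flags.append("")     # ignore blank rows
        elif key in seen:
            flags.append("dup")  # a repeat: candidate for removal
        else:
            seen.add(key)
            flags.append("")     # first occurrence: keep
    return flags

keys = ["ana||2024-01-05", "ben||2024-01-05", "ana||2024-01-05", "", "ana||2024-01-05", ""]
print(flag_repeats(keys))  # ['', '', 'dup', '', 'dup', '']
```

Deleting only the rows marked "dup" leaves exactly one row per key.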

Can I automate duplicate checks for new data entries?

Yes, you can set up templates with formulas and conditional formatting that automatically apply to new data. Consider using Apps Script or add-ons for advanced automation.

Automate checks with templates or scripts so new data is continuously validated.

The Essentials

- Identify a clear duplicate key to ensure accurate detection

- Use normalization so duplicates are not missed because of spacing or capitalization

- Combine conditional formatting with formulas for robust deduping

- Extract duplicates separately to protect original data

- Document steps to enable repeatable workflows