Most Common Text in Google Sheets: Find, Count, and Clean Data

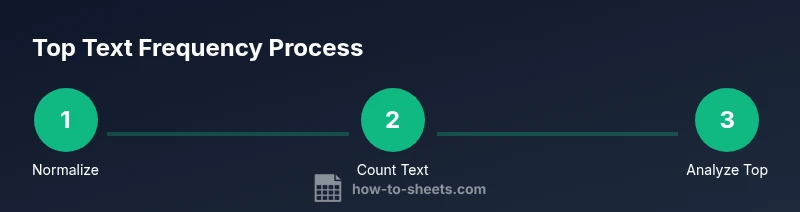

Learn how to identify the most common text in a Google Sheets range with practical, step-by-step formulas and methods. Normalize text, count occurrences, and surface top values for clean, reliable data analysis.

This guide helps you identify the most common text in a Google Sheets range by normalizing text (lowercase and trimming), counting occurrences, and surfacing the top entries. You’ll compare methods like QUERY-based frequency analysis and pivot-table approaches, with practical tips for handling case, blanks, and ties.

What is the most common text in Google Sheets?

In data work, the phrase "most common text" refers to the text value that appears most frequently within a specified range. In Google Sheets, you can determine this even when data varies in case or contains extra spaces, by standardizing those variations first. According to How To Sheets, identifying the true top string is a foundational data-cleaning step that makes downstream analysis more reliable. Recognizing the most common text helps with deduplication, categorization, and quick quality checks across columns or whole sheets. When your data is consistent, you can trust the frequency results and reuse this workflow in future datasets.

This definition matters whether you’re tracking customer names, product categories, or survey responses. A robust approach minimizes miscounts caused by simple inconsistencies and provides a clear view of prevailing terms across datasets.

Why identifying the most common text matters

The ability to find the most frequent text plays a critical role in data cleaning and quality assurance. When text values are inconsistent, frequencies can be distorted, leading to misinterpretations or faulty dashboards. Cleaning processes—like converting to lowercase, trimming spaces, and standardizing punctuation—help ensure that words like "Apple", "apple", and " APPLE " are counted as the same entry. By surfacing the top strings, you can prioritize corrections, design better drop-down lists, and improve automated categorization. How To Sheets’ analyses show that standardizing text reduces duplicates and speeds up analysis, especially in large datasets. This workflow also scales to multiple ranges and sheets, making recurring tasks faster and more accurate.

Core tools and functions for text frequency

To find the most common text in Google Sheets, you’ll rely on a small toolkit of built-in functions that handle text normalization and counting. Key functions include LOWER() to normalize case, TRIM() to remove extraneous spaces, and UNIQUE() to list distinct values. COUNTIF() counts occurrences of each distinct value, and QUERY() can group, count, and sort results in a single, readable formula. ARRAYFORMULA() lets you apply these operations across entire columns without dragging formulas. For larger datasets, you can pair these with SORTN() to extract the top N results. Together, these tools enable a compact, repeatable solution that works across many datasets.

Method A: Using a frequency query (LOWER, TRIM, and QUERY)

A reliable way to surface the most common text is to normalize text and then group by the normalized value using QUERY. Try this approach as a starting point (assumes data in A2:A, with A1 as header or data starting in A2):

=INDEX(QUERY(ARRAYFORMULA(TRIM(LOWER(A2:A))),

"select Col1, count(Col1) where Col1<>'' group by Col1 order by count(Col1) desc" , 0), 1, 1)

This returns the top text after normalization. If you want the top count as well, use the same QUERY result and extract column 2. Remember to adjust A2:A to your actual data range. The key is TRIM and LOWER to collapse variations like extra spaces and mixed-case entries into a single, comparable form.

Method B: Pivot tables and dashboards for large datasets

When datasets grow large, a pivot table offers a hands-off way to see the frequency of text values. Create a pivot with Rows set to the text column (normalized with a helper column if needed), and Values set to Count of that column. This approach is intuitive, scalable, and lets you add slicers or filters to explore top texts by category or time period. While pivot tables are not formula-driven, they provide a quick, maintainable method for frequent analyses and can be refreshed with a single click.

Handling whitespace, case, and blanks

Whitespace and case differences are among the most common reasons for miscounts. Always trim spaces and standardize case before counting. Exclude blank cells from your analysis to avoid skewed results. A practical pattern is to build a normalized helper column (for example, in column B): =TRIM(LOWER(A2)) and then perform frequency analysis on that helper column. This reduces the chance of duplicate entries like "Apple" and "apple" being counted separately.

Practical example: data in A2:A100

Suppose your dataset contains text entries in A2:A100 (names, labels, categories). Step by step, you would:

- Normalize: In B2, enter

=TRIM(LOWER(A2))and fill down to B100. - Frequency list: In C2, enter a frequency formula such as:

=QUERY(B2:B100, "select Col1, count(Col1) where Col1<>'' group by Col1 order by count(Col1) desc", 0) - Top result: The first row of the QUERY output (Col1) is the most common text; you can also grab the count from the second column.

- Tie handling: If two texts have the same count, use a method like SORTN to list the top 2 or use a secondary sort by the text value to surface all ties.

- Verification: Manually spot-check a few entries to ensure normalization captured all variants (e.g., removing punctuation or diacritics if relevant).

These steps give you a repeatable process that scales from a single column to multiple ranges, and they translate well to scripts for automation.

Authority sources

For readers who want to explore best practices in data cleaning and text analysis, consult established sources on data management and analysis:

- https://www.census.gov

- https://www.harvard.edu

- https://www.nature.com

Tools & Materials

- Google Sheets access(A sheet where you will analyze text data (single column is fine).)

- Sample dataset of text values(Place data in column A, starting from A2 (A1 can be a header).)

- Web browser(To access Google Sheets and follow along with examples.)

- Pivot table (optional)(Use if you prefer a GUI-based frequency view for large datasets.)

Steps

Estimated time: 15-25 minutes

- 1

Open your dataset

Open the Google Sheet that contains the text data you want to analyze. Locate the target column (for example column A) and confirm there are no unexpected non-text values in the range you’ll analyze.

Tip: If your data has a header, start from the first data row (e.g., A2). - 2

Create a normalized helper column

In a new column (e.g., B), apply text normalization to each cell: =TRIM(LOWER(A2)). Copy down to cover all data. This collapses spaces and converts to lowercase so similar texts match.

Tip: Use an array formula if you want to fill the entire column automatically: =ARRAYFORMULA(TRIM(LOWER(A2:A))) - 3

Generate a frequency list with QUERY

In a new area, run a frequency query on the normalized column to group by text and count occurrences. Example: =QUERY( B2:B100, "select Col1, count(Col1) where Col1<>'' group by Col1 order by count(Col1) desc", 0).

Tip: This returns a simple list of text and their counts, sorted from most frequent to least. - 4

Extract the top text

From the QUERY results, pick the first row to identify the most common text. If you also want the count, reference the second column of the same row.

Tip: If multiple texts tie for top frequency, you’ll see the same count across several rows; plan to surface multiple top values. - 5

Handle ties and blanks

If you expect ties, use a second sort (e.g., by the text value) or extract the top N rows with SORTN. Ensure blanks are excluded by filtering out empty strings in your query.

Tip: Always verify that blanks in your data aren’t being treated as a text value. - 6

Validate and reuse

Save the normalized approach as a template or a small script so you can reproduce the result on new datasets with minimal edits.

Tip: Document the range and any assumptions (headers, data type) for future reuse.

FAQ

What is the most common text in Google Sheets?

The most common text is the string that appears most often in a given range after normalizing for case and extra spaces. This helps ensure accurate frequency counts across the dataset.

The most common text is the string that appears the most after standardizing case and spaces.

How do I handle case sensitivity when counting?

Convert all text to lowercase (or uppercase) before counting, using functions like LOWER() and TRIM(). This ensures equivalent text is counted as a single entry.

Make everything lowercase first, then count to avoid case-based duplicates.

What if two texts share the top frequency?

If there are ties, surface the top N results by expanding the query or using SORTN to display all items with the highest count.

If there’s a tie, show all top texts with the same highest count.

Can I automate this in Apps Script?

Yes. Apps Script can run the normalization and frequency steps across multiple ranges and return the top texts in a dedicated summary sheet.

You can automate it with Apps Script for repeated analyses.

Is this approach scalable for large datasets?

Pivot tables or server-side data processing can handle large datasets efficiently. For formulas, test on a subset first and optimize ranges.

Pivot tables scale well; for formulas, test on smaller samples before expanding.

What about numbers or dates in the text column?

Treat numbers or dates as text if needed by converting with TEXT() or by ensuring the input is formatted consistently before counting.

Consider formatting consistency if your data mixes numbers, dates, and text.

Watch Video

The Essentials

- Identify the top text by normalizing and counting

- Use QUERY with LOWER and TRIM for readable results

- Pivot tables offer a GUI alternative for large datasets

- Handle ties by listing all top contenders

- Automate the workflow for repeatable analysis